2026-4-23 08:47:18 Author: securityboulevard.com

The post They Say Gartner is Dead. Clearly, They Haven’t Checked Their LLM Sources. appeared first on Cybersecurity & Business.

Brands and vendors are always working to create their own reality and to shape our perception to match it. That is how it has always been and always will be, and now they have new allies: LLMs.

And most people using them for market research haven’t noticed yet.

Let’s say you’re researching Vulnerability Management solutions. You open ChatGPT or Claude and type: “What are the best Vulnerability Management solutions?”, the same way you would have started a Google search three years ago.

Very likely, you get a confident, well-structured answer recommending Tenable, Qualys, Rapid7, and a few others. It makes sense, right? Those are the known “leaders” in the space. Common knowledge.

But have you ever checked what sources the LLM is actually using to give you that answer?

I did. I ran that kind of question across ChatGPT, Claude, and a couple of others, covering 15+ cybersecurity categories. And here’s the short version: if you thought industry analysts were biased — and many do — LLMs are clearly even more so.

I have always been interested in the sources behind any research or statistics I read. So I configured both ChatGPT and Claude to tell me, at the end of every interaction, which sources they drew on. That instruction is baked into the custom settings of both tools:

> Always list your sources at the end of your response. Split between your own sources (internal – not those you search on the web for), and the ones you search on the web (external). Always include name and year for your own sources (and link if exists), and for the external sources, include name of publication, date and link.

What comes back looks something like this: a list of URLs, report titles, and vendor pages that the LLM uses, directly or indirectly, to construct its answer. That source list is what I always check to judge the quality of the LLM's output.

For instance, these were the sources the first time I asked ChatGPT about Vulnerability Management solutions:

Once you start reading those sources, it’s hard to unsee what’s happening.

Across 15+ cybersecurity sectors (IoT Security, Vulnerability Management, WAF/WAAP, SIEM/XDR, ASM/Exposure Management, Zero Trust, SSE, PAM, NDR, Email Security, Firewall/Network Security, Backup/Data Protection, Endpoint Protection/EDR, and others), the pattern is very consistent. In almost every case, the LLM's answer drew on three types of sources:

- Industry analyst reports (primarily Gartner and Forrester)
- Articles written by the vendors themselves
- US-based media and publications

Even the categories that relied less on analyst reports (like Backup/Data Security and Firewall/Network Security) still drew on Gartner or Forrester indirectly, through articles citing their Magic Quadrant or Wave reports.

For instance, this is the output of one of the times I checked for Backup & Data Security:

Curious what the answers would look like beyond core cybersecurity, I also checked the responses for the Identity Verification market and for the emerging AI SOC category. That's where things got even more revealing.

For AI SOC, a space where there is no Gartner MQ or Forrester Wave yet, I ran the same question five times across both Claude and ChatGPT. Every single time, 100% of the cited sources were vendor-authored content. No independent analysis. No practitioner perspectives. Just vendors describing themselves.

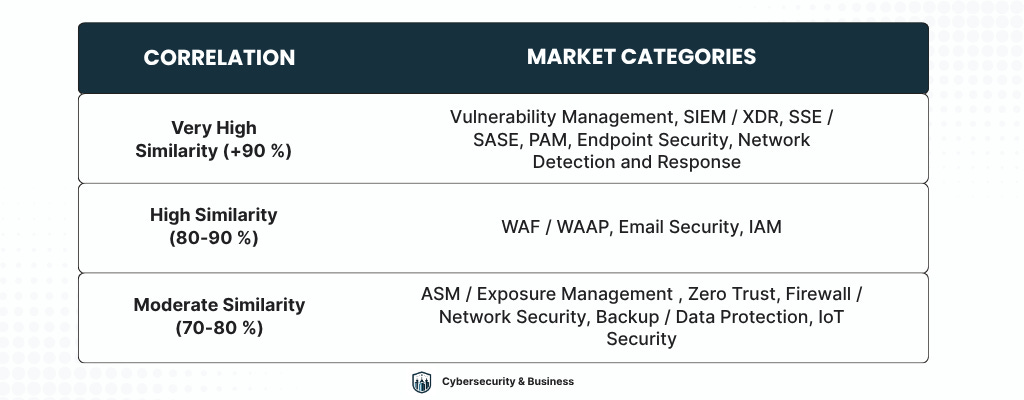

I compared the LLM-recommended solutions in each category against the current Magic Quadrant and Wave Leaders lists. The correlation was always very high. In the majority of categories I tested, the LLM recommendations were either an exact match or near-exact match with the analyst “Leaders” tier.

In the cases of very high similarity, we’re talking about a complete 1:1 match. Same vendors, same order of emphasis.

Think about what that means. When someone asks an LLM which security product to buy, they're not getting independent research or some esoteric insight unique to artificial intelligence. They're getting Gartner's Magic Quadrant, repackaged as a chat response.
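To make "exact or near-exact match" concrete, one could score the overlap between an LLM's recommended vendors and an analyst Leaders list. The sketch below is a hypothetical illustration, not the method used for this article: it assumes both lists are plain vendor names, computes their Jaccard similarity (shared vendors divided by all distinct vendors), and checks whether the shared vendors appear in the same order. The vendor names are placeholders, not real results.

```python
def jaccard(a: list[str], b: list[str]) -> float:
    """Fraction of vendors shared by both lists, ignoring order (1.0 = identical sets)."""
    set_a, set_b = set(a), set(b)
    if not set_a and not set_b:
        return 1.0  # two empty lists are treated as identical
    return len(set_a & set_b) / len(set_a | set_b)


def same_order(a: list[str], b: list[str]) -> bool:
    """True if the vendors common to both lists appear in the same relative order."""
    common = set(a) & set(b)
    return [v for v in a if v in common] == [v for v in b if v in common]


# Hypothetical placeholder lists, not data from the article's runs.
llm_recommendations = ["Vendor A", "Vendor B", "Vendor C", "Vendor D"]
analyst_leaders = ["Vendor A", "Vendor B", "Vendor C", "Vendor D"]

print(jaccard(llm_recommendations, analyst_leaders))     # → 1.0
print(same_order(llm_recommendations, analyst_leaders))  # → True
```

A "complete 1:1 match" in the sense used here would score 1.0 and keep the same order. Jaccard is strict, though: swapping a single vendor in a four-vendor list already drops the score to 3/5 = 0.6, so where "near-exact" begins is a judgment call.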

Going through the results in more detail shows that, at least when it comes to cybersecurity, this cannot even be called "market research". It's just an optimization game: SEO, AEO (answer engine optimization), GEO (generative engine optimization), or whatever you want to call it.

In every category I checked, vendors' own blogs or product documentation always made up more than 50% of the cited sources, and in some cases, like the AI SOC example above, they made up 100%.

This shows that LLM-based market research is heavily influenced by how well optimized vendor websites are.

This is a self-fulfilling prophecy machine. The biggest brands call themselves the best, LLMs confirm it, buyers believe it.

Smaller vendors — especially those that don’t yet have analyst coverage or haven’t invested heavily in English-language content — are simply invisible in this model. Not because their solutions are worse. Because their websites aren’t optimized.
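The vendor-authored shares quoted above (more than 50% in every category, 100% for AI SOC) come down to tallying each cited source by type. A minimal sketch of such a tally, assuming each source in a run has already been labeled by hand with one of the three source types described earlier; the label strings and the sample run are hypothetical, not the author's dataset.

```python
from collections import Counter

# Hypothetical labels for the three source types: analyst reports,
# vendor-authored content, and media/publications.
ANALYST = "analyst"
VENDOR = "vendor"
MEDIA = "media"


def source_shares(labels: list[str]) -> dict[str, float]:
    """Return each category's share of all cited sources, as a percentage."""
    if not labels:
        return {}
    counts = Counter(labels)  # keeps first-seen order of categories
    total = len(labels)
    return {category: 100 * n / total for category, n in counts.items()}


# Placeholder sample: eight cited sources from one hypothetical run.
run = [VENDOR] * 5 + [ANALYST] * 2 + [MEDIA]
shares = source_shares(run)
print(shares)                      # → {'vendor': 62.5, 'analyst': 25.0, 'media': 12.5}
print(shares.get(VENDOR, 0) > 50)  # → True
```

Labeling each source by its publisher's country instead of its type would give the same kind of geographic split.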

More than 90% of the sources cited across all my LLM runs were from the United States.

That’s not entirely surprising when you consider the compounding factors: US vendors dominate revenue and visibility; Gartner and Forrester are US-headquartered; and the leading English-language cybersecurity media is overwhelmingly American. By the time all of those layers stack up, there’s very little room left for any other geography.

And this doesn’t happen only with US-based chatbots like ChatGPT or Claude.

I also tested Mistral Le Chat, the French alternative, to see whether a European LLM would produce different results. It didn't.

If anything, Mistral leaned even more heavily on Gartner and Forrester, explicitly citing their reports as primary sources (its own knowledge) in almost every run. It also regularly surfaced analyst content from 2024, meaning not even the most recent analyst opinions were being factored in.

If you’re evaluating security vendors in Europe or another region, the model you’re relying on for research was trained almost entirely on American market narratives.

For decades, Gartner and Forrester reports have been the main source many cybersecurity buyers rely on to select and evaluate potential vendors. Many practitioners have always pushed back on that, arguing the reports are biased, pay-to-play, or US-centric, and some even celebrated when their shares started to go down in recent years.

There might be some level of truth in those criticisms. Some expected AI to fix that. It hasn’t.

Instead, the LLMs that were meant to democratize information access have simply inherited and amplified the same biases. Analyst reports, vendor content, and US-centric media are still the dominant inputs. The interface might have changed, but the underlying sources didn’t.

Analysts aren’t going anywhere, and this research shows it. Without more rigorous, independent sources in the training mix, LLM-based “research” in cybersecurity will just keep recycling the same marketing narratives.

Gartner and Forrester, whatever their flaws, at least gather direct practitioner feedback. That’s something the average vendor blog doesn’t do.

The next time you ask ChatGPT or Claude to help you choose a cybersecurity product, remember: the answer you're seeing is not independent analysis. It's the product of analyst reports, vendor articles, SEO/AEO/GEO, and geographical influence, just repackaged in a different form.

*** This is a Security Bloggers Network syndicated blog from Cybersecurity & Business authored by Ignacio Sbampato. Read the original post at: https://cybersecandbiz.substack.com/p/they-say-gartner-is-dead-clearly
