Summary: AI agents have already entered enterprise systems and may trigger security or fraud incidents. Although 97% of enterprise leaders expect such an incident within the next year, only 6% of security budgets go toward this risk. Enterprises face three gaps (detection, attribution and governance) and need to strengthen non-human identity management, improve visibility into automated decision chains, and build attribution capabilities to counter AI-driven security threats.

2026-4-2 18:8:27 Author: securityboulevard.com

Is Your Business Ready?

The threat is no longer hypothetical. AI agents (autonomous systems capable of planning, reasoning and acting across digital environments) are already operating inside enterprise systems. They’re retrieving data, triggering transactions, and interacting across services through legitimate credentials and approved workflows. According to new research from Arkose Labs, nearly every enterprise security leader knows what comes next.

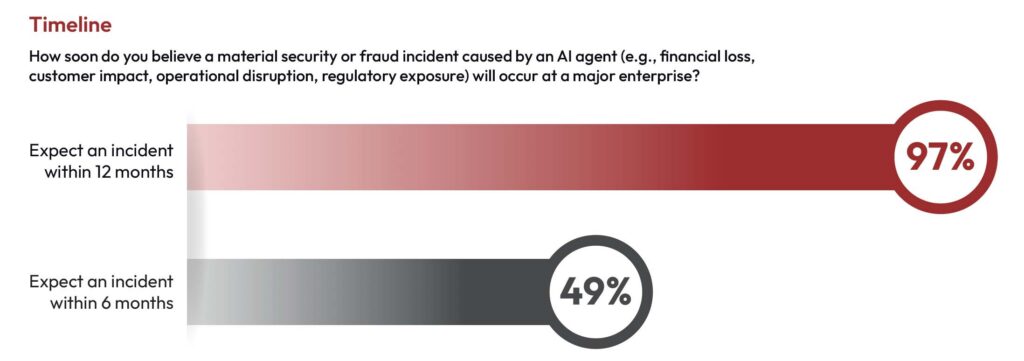

In our 2026 Agentic AI Security Report, based on a global survey of 300 enterprise leaders across security, fraud, identity and AI functions, 97% of respondents expect a material AI-agent-driven security or fraud incident within the next 12 months. Nearly half expect one within six months. Yet only 6% of security budgets are currently allocated to this risk.

It’s not hard to see how we got here. The productivity and competitive gains from agentic AI are real, and enterprises moved quickly to capture them, often before the identity, security and governance frameworks needed to manage autonomous systems were in place. The technology outran the controls. This is not negligence; it’s the nature of a category that moved faster than any prior wave of enterprise technology adoption.

The threat is understood. The preparation is not.

The Gap Between Knowing and Being Ready

“In the rush to benefit from the amazing productivity and efficiency gains that agentic AI represents and in keeping pace with competitors, many companies deployed it broadly before fully considering the identity, security and governance issues involved,” said Frank Teruel, Chief Operating Officer of Arkose Labs. “Not only do enterprises need to distinguish between malicious and authorized agents, they need the visibility and attribution capabilities to know what those agents are doing once they’re inside.”

There’s a striking contradiction running through the data. Enterprise leaders broadly recognize agentic AI as a near-term security risk, yet investment and governance have not kept pace with that recognition.

On average, organizations allocate just 6% of their security budgets to AI-agent risk. One in ten doesn’t track AI-agent risk separately at all. Over half report having no formal AI-agent governance controls in place today.

This is the acceleration window: a compressed period where agentic AI deployment is outrunning the controls required to manage it. Organizations that close this gap now will be significantly better positioned when the first major incident hits. Those that don’t will be responding to something they saw coming.

Three Gaps That Define Enterprise Exposure

Our research surfaces three operational vulnerabilities that run across enterprise functions and geographies.

The Detection Illusion

Most security teams, more than 70%, are not confident their tools will scale as AI-driven attacks continue to evolve — a warning sign that today’s adequacy will likely not hold tomorrow.

Respondents cited model drift, adaptive bypass techniques and fragmented signals across systems as reasons detection may become harder as autonomous systems mature. The tools that work against today’s agentic AI threats may not be fit for tomorrow’s.

The Attribution Crisis

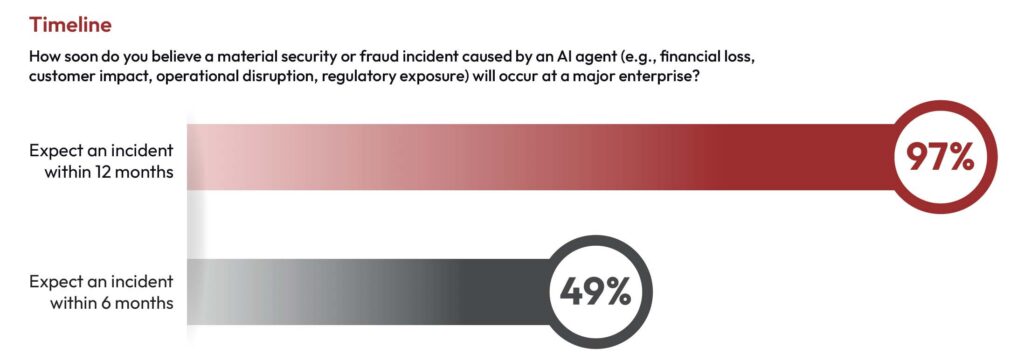

Detection is only the first step. When an incident occurs, organizations must also determine what happened, which systems were involved, and whether the initiating actor was human, automated or adversarial.

Only 26% of enterprise leaders are very confident they could definitively prove that an AI agent caused a security or fraud incident. As one Director of Security Engineering put it: “Movement between interconnected systems can resemble legitimate operational behavior.”

That’s not a technology problem. It’s a visibility and forensics problem, and it has direct implications for regulatory accountability and incident response.

The Governance Vacuum

Fifty-seven percent of organizations have no formal governance controls for AI agents today. Yet 88% expect to have defined or mature frameworks within three years. That three-year window — between where most organizations are now and where they expect to be — is exactly the period of maximum exposure. Attackers don’t wait for governance frameworks to mature.

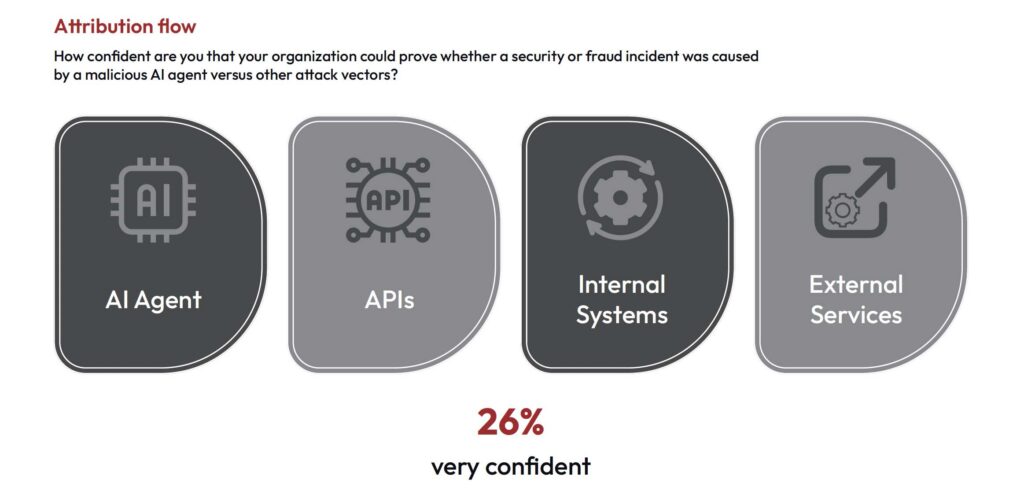

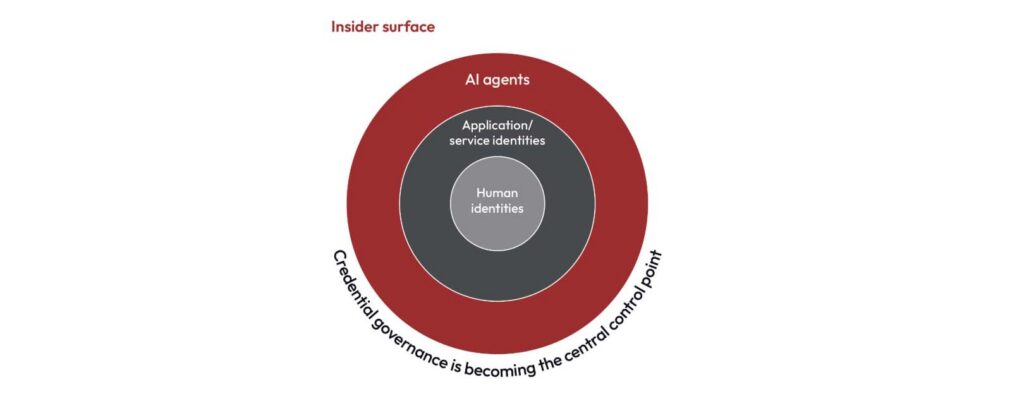

The New Face of Insider Risk

Perhaps the most striking finding in the report concerns where the risk is coming from. Eighty-seven percent of enterprise leaders agree that AI agents operating with legitimate credentials pose a greater insider threat risk than human employees.

This reframes the problem fundamentally. Traditional security models were built around the assumption that insider threats come from people: employees, contractors and bad actors using compromised accounts. AI agents now operate inside enterprise environments through service accounts, API tokens and application identities that often carry significant privileges. Their activity can closely resemble legitimate system behavior, making malicious automation harder to isolate and attribute. This is the ultimate insider threat.

As one EVP of AML, Sanctions & Fraud observed: “Stealthy increases in access rights undermine preventive controls.”

The insider threat of 2026 doesn’t need badge access. It already has API credentials.

What Prepared Organizations Are Doing Now

The following priorities can help enterprises improve readiness as agentic AI adoption accelerates.

Integrate Security Leadership Into AI Deployment

Agentic AI initiatives often originate within engineering and product teams, with security becoming involved later — limiting visibility into how automated systems interact with enterprise infrastructure once embedded in operational workflows. Organizations closing the readiness gap are integrating security leadership earlier in AI deployment planning, so that identity controls, monitoring standards and investigative capabilities are established alongside system development rather than retrofitted after the fact.

Treat Non-Human Identities as First-Class Security Entities

AI agents operate through service accounts, API credentials and application identities, collectively known as non-human identities (NHIs), that often hold significant privileges. As automation expands, these machine identities increasingly interact with sensitive systems and data, and in most enterprise environments their numbers are growing faster than human identity counts. Organizations should establish clear visibility into all non-human identities and ensure automated systems operate within defined access boundaries. Managing them with the same rigor as human accounts is no longer optional.
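As a minimal sketch of what such an inventory check could look like, the Python below flags machine identities that lack an accountable owner or hold scopes outside their defined access boundary. The `NonHumanIdentity` record, its field names and the scope strings are illustrative assumptions; they do not come from the report or from any specific product.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class NonHumanIdentity:
    """One machine identity (hypothetical schema for illustration)."""
    name: str
    kind: str                       # e.g. "service_account" or "api_token"
    owner: Optional[str]            # accountable team, None if unknown
    granted_scopes: FrozenSet[str]  # what the identity can actually do
    allowed_scopes: FrozenSet[str]  # the defined access boundary


def audit(identities):
    """Return {identity name: [findings]} for identities that need review."""
    findings = {}
    for nhi in identities:
        issues = []
        if nhi.owner is None:
            issues.append("no accountable owner")
        excess = nhi.granted_scopes - nhi.allowed_scopes
        if excess:
            issues.append("scopes outside boundary: " + ", ".join(sorted(excess)))
        if issues:
            findings[nhi.name] = issues
    return findings


# Hypothetical inventory; real data would come from an IAM export.
inventory = [
    NonHumanIdentity(
        "billing-agent", "service_account", "payments-team",
        frozenset({"invoices:read"}),
        frozenset({"invoices:read", "invoices:write"}),
    ),
    NonHumanIdentity(
        "report-bot", "api_token", None,
        frozenset({"reports:read", "users:admin"}),
        frozenset({"reports:read"}),
    ),
]

for name, issues in audit(inventory).items():
    print(name, "->", issues)
```

Comparing granted scopes against an explicit boundary, rather than reviewing grants in isolation, is one simple way to surface the kind of privilege accumulation the report's respondents describe.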

Establish Visibility Into Automated Decision Chains

Security teams cannot investigate what they cannot observe. Key capabilities include tracking which systems invoke specific credentials, monitoring automated interactions across APIs and services, and identifying unusual patterns in automated workflows. Building this telemetry and logging infrastructure is the foundational requirement for both detection and response — and for distinguishing legitimate automation from potentially malicious activity.
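One way to picture the capabilities listed above is a baseline of which callers have used each credential against which endpoints, with anything outside that baseline surfaced for review. The sketch below assumes a simple event format (`credential`, `caller`, `endpoint` keys) invented for illustration; real telemetry would carry far more context, and a production system would use richer anomaly detection than a seen/unseen check.

```python
from collections import defaultdict


def build_baseline(events):
    """Map each credential to the (caller, endpoint) pairs observed so far."""
    baseline = defaultdict(set)
    for e in events:
        baseline[e["credential"]].add((e["caller"], e["endpoint"]))
    return baseline


def unusual_events(baseline, new_events):
    """Return events whose (caller, endpoint) pair is new for that credential."""
    return [
        e for e in new_events
        if (e["caller"], e["endpoint"]) not in baseline.get(e["credential"], set())
    ]


# Hypothetical historical and incoming events.
history = [
    {"credential": "tok-orders", "caller": "orders-agent", "endpoint": "/orders"},
    {"credential": "tok-orders", "caller": "orders-agent", "endpoint": "/inventory"},
]
incoming = [
    {"credential": "tok-orders", "caller": "orders-agent", "endpoint": "/orders"},
    {"credential": "tok-orders", "caller": "unknown-agent", "endpoint": "/payouts"},
    {"credential": "tok-new", "caller": "orders-agent", "endpoint": "/orders"},
]

for e in unusual_events(build_baseline(history), incoming):
    print("review:", e)
```

A credential that has never been seen before is treated as unusual by default, which errs on the side of review rather than silence.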

Strengthen Attribution Capabilities

As AI agents participate in multi-step workflows across systems and services, incident investigations grow significantly more complex. The ability to reconstruct what an autonomous system did — across which APIs, through which credentials, to which outcomes — will determine whether an organization can respond effectively and demonstrate accountability to regulators.
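To make the reconstruction step concrete, here is a small sketch that assumes every logged action carries a shared `trace_id` tying it to one workflow. Given that assumption, rebuilding the chain is a filter and a sort. The event fields (`trace_id`, `ts`, `actor`, `credential`, `api`, `outcome`) are hypothetical names chosen for this example, not a schema from the report.

```python
def reconstruct_chain(events, trace_id):
    """Return the ordered steps of one workflow: actor, credential, API, outcome."""
    steps = sorted(
        (e for e in events if e["trace_id"] == trace_id),
        key=lambda e: e["ts"],
    )
    return [(e["ts"], e["actor"], e["credential"], e["api"], e["outcome"]) for e in steps]


def initiating_actor(events, trace_id):
    """Return the actor behind the earliest step, or None if the trace is unknown."""
    chain = reconstruct_chain(events, trace_id)
    return chain[0][1] if chain else None


# Hypothetical, deliberately out-of-order log entries.
log = [
    {"trace_id": "t-1", "ts": 3, "actor": "payout-service", "credential": "svc-pay",
     "api": "POST /payouts", "outcome": "approved"},
    {"trace_id": "t-1", "ts": 1, "actor": "support-agent", "credential": "tok-support",
     "api": "GET /customers/42", "outcome": "ok"},
    {"trace_id": "t-2", "ts": 2, "actor": "orders-agent", "credential": "tok-orders",
     "api": "GET /orders", "outcome": "ok"},
    {"trace_id": "t-1", "ts": 2, "actor": "support-agent", "credential": "tok-support",
     "api": "POST /refunds", "outcome": "queued"},
]

for step in reconstruct_chain(log, "t-1"):
    print(step)
print("initiated by:", initiating_actor(log, "t-1"))
```

The sketch only works if a correlation identifier is propagated across every service an agent touches, which is why the logging groundwork has to come before attribution.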

The Window Is Open Now

Autonomous systems are expanding across enterprise environments faster than most organizations can operationalize oversight. The data in this report makes clear that enterprise leaders understand the risk, recognize its urgency, and are watching their governance and tooling fall short.

The organizations that will navigate this era effectively are not waiting for formal regulatory frameworks to tell them what to do. They are building the visibility, attribution, and classification capabilities now — because in the era of agentic AI, resilience depends on operational readiness, not policy intent.

For organizations asking how to govern AI agents in 2026, the 2026 Agentic AI Security Report delivers the answers — regional breakdowns, function-level findings across security, fraud, identity and AI leaders, and a complete action guide for building identity-first agentic AI resilience.

The 2026 Agentic AI Security Report is based on a global survey of 300 enterprise leaders conducted in February 2026. Respondents represent organizations across North America, Europe and Asia-Pacific, spanning financial services, banking, technology, telecommunications, retail and e-commerce, healthcare, manufacturing and digital services. Results are statistically valid at a 95% confidence level with a ±5.6% margin of error.
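For readers who want to sanity-check the stated margin of error, the standard formula for a proportion is z · sqrt(p(1 − p) / n). The sketch below assumes the worst-case proportion p = 0.5 and z = 1.96 for 95% confidence; the report does not state its exact method, so small differences from the published figure (for example from rounding or a finite-population correction) are possible.

```python
import math


def margin_of_error(n, z=1.96, p=0.5):
    """Margin of error for a sample proportion, simple random sample assumed."""
    return z * math.sqrt(p * (1 - p) / n)


moe = margin_of_error(300)
print(f"n=300 -> +/-{moe:.2%}")
```

With 300 respondents this lands at a little under 5.7%, in line with the roughly ±5.6% the report cites.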

*** This is a Security Bloggers Network syndicated blog from Arkose Labs authored by Mandeep Khera. Read the original post at: https://www.arkoselabs.com/blog/agentic-ai-security-risk-enterprise-readiness/
