Author: danielmiessler.com

We need to avoid the Bitter Lesson mistake when building AI systems

February 22, 2026

I have a new concept, Bitter Lesson Engineering (BLE), that I'm using everywhere in my AI engineering.

The idea comes from Richard Sutton's essay, "The Bitter Lesson".

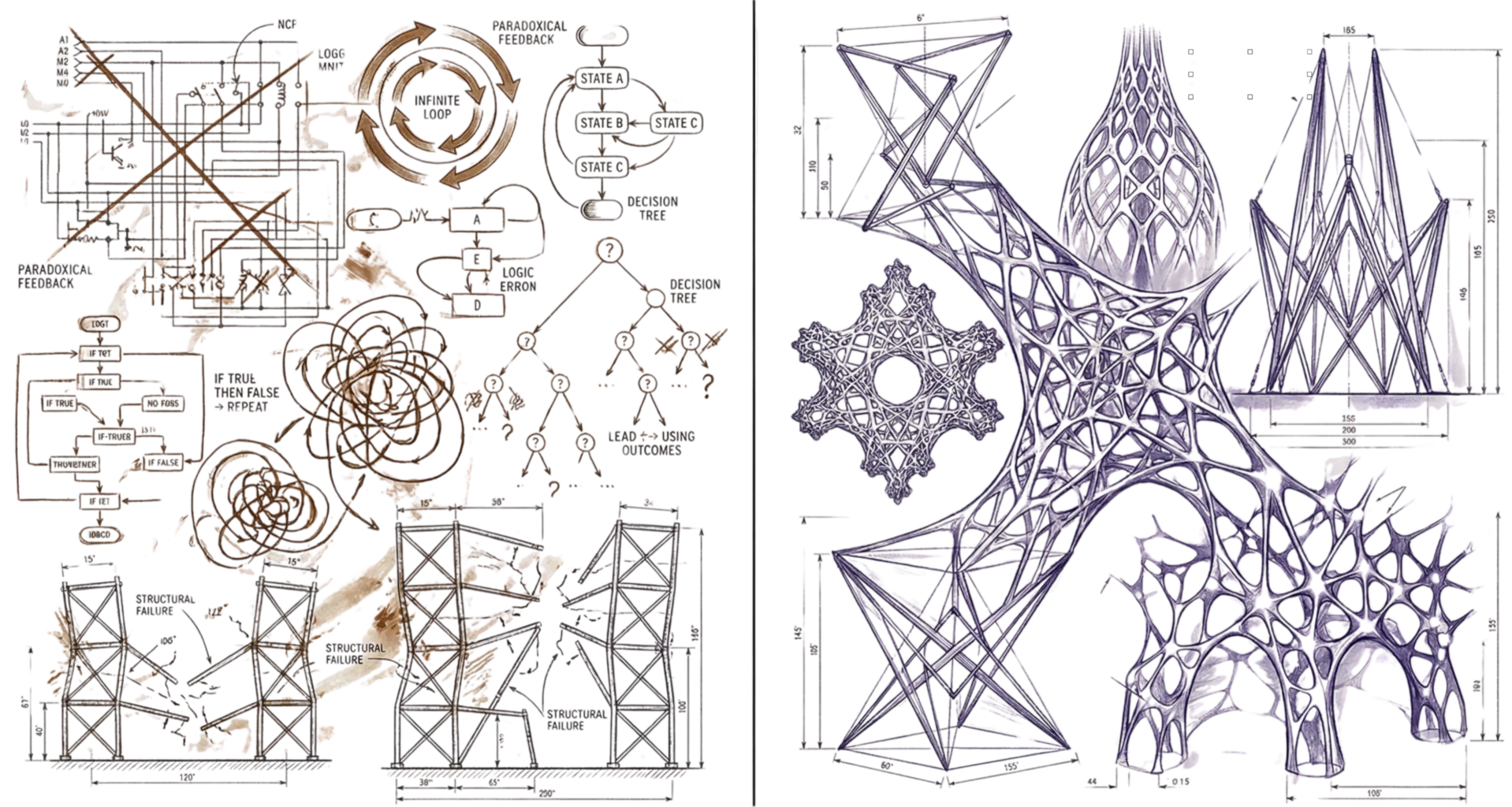

The essay argues that all of our human attempts to control, modify, and enhance AI are kind of not worth it, because making the AI itself more capable (through more hardware, better algorithms, or whatever) will increase its intelligence far more than anything we can do with our human approaches.

It's actually stronger than that. Not only will our help fail to make things better, it will likely make them far worse.

Essentially, we should avoid poisoning AI's native capabilities with our supposedly superior guidance, because it's not actually superior.

Some other quotes from the essay:

"The biggest lesson that can be read from 70 years of AI research is that general methods that leverage computation are ultimately the most effective, and by a large margin."

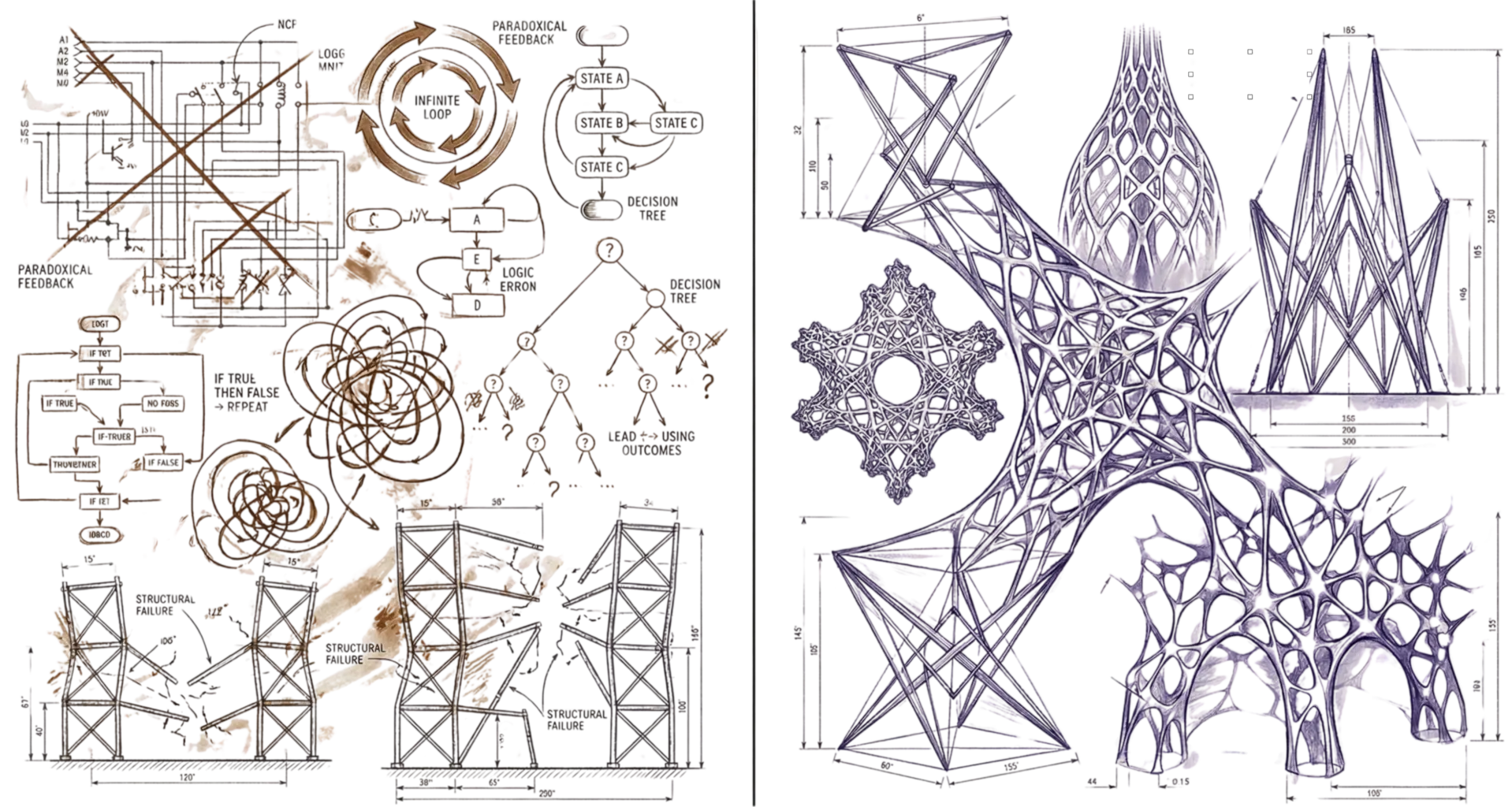

"We want AI agents that can discover like we can, not which contain what we have discovered."

"We should build in only the meta-methods that can find and capture this arbitrary complexity."

"Building in our discoveries only makes it harder to see how the discovering process can be done."

My takeaways:

- The way we think about logic, intelligence, and efficiency is very likely primitive

- So we shouldn't be hard-coding those rules or ideas into how we "teach" AI to do things

- As AI gets smarter, it'll come up with far better ways to do the same things from first principles

So my simple BLE rule for myself when building AI systems is:

Don't confuse the "what" with the "how".

Be extremely specific about what you want, then give the best tools you have to the best AI you have and let it figure out how to execute.

This means that as the AI gets smarter, our scaffolding becomes more about preferences than execution, ultimately making our entire system meta-upgradeable instead of hobbled by the Bitter Lesson mistake.
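To make the "what" versus "how" split a little more concrete, here is a minimal Python sketch, assuming an LLM-based agent that is driven by a text prompt. `TaskSpec`, `AgentConfig`, `render_prompt`, the tool names, and the model name are all hypothetical illustrations, not part of any specific framework. The spec records the goal, what "done" looks like, preferences, and the tools on offer, but deliberately contains no step-by-step procedure; the model identifier lives in config, so swapping in a smarter model would not require touching the spec.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TaskSpec:
    """Hypothetical spec that captures the "what" and deliberately omits the "how"."""

    goal: str
    success_criteria: list[str]
    # Preferences express taste (tone, length), not execution steps.
    preferences: list[str] = field(default_factory=list)
    # Tools are capabilities on offer, not a script for using them.
    tools: list[str] = field(default_factory=list)


@dataclass
class AgentConfig:
    # Hypothetical model identifier: upgrading the AI means editing only this field.
    model: str
    spec: TaskSpec


def render_prompt(spec: TaskSpec) -> str:
    """Render the spec as a prompt that states outcomes and leaves execution to the model."""
    lines = [f"Goal: {spec.goal}", "", "Done when:"]
    lines += [f"- {criterion}" for criterion in spec.success_criteria]
    if spec.preferences:
        lines += ["", "Preferences:"]
        lines += [f"- {pref}" for pref in spec.preferences]
    if spec.tools:
        lines += ["", "Available tools: " + ", ".join(spec.tools)]
    # No step-by-step procedure: the model decides how to reach the goal.
    lines += ["", "Decide for yourself how to reach the goal."]
    return "\n".join(lines)


if __name__ == "__main__":
    config = AgentConfig(
        model="best-available-model",  # placeholder, not a real model name
        spec=TaskSpec(
            goal="Summarize this week's security advisories that affect our dependencies",
            success_criteria=[
                "Every relevant advisory is listed",
                "Each item includes severity and a recommended fix",
            ],
            preferences=["Plain English", "Under 300 words"],
            tools=["web_search", "read_file"],  # hypothetical tool names
        ),
    )
    print(render_prompt(config.spec))
```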
