Summary: Abuse of the Kubernetes nodes/proxy GET permission can lead to remote code execution (RCE). An attacker connects over WebSocket to the kubelet's /exec endpoint and runs commands in any pod. Upstream treats the behavior as "working as intended" and will not fix it directly. Suggested mitigations are to audit and remove unnecessary nodes/proxy GET grants, restrict network access to the kubelet, and use tooling such as NodeZero for detection.

Published: 2026-2-27 15:59:42. Author: horizon3.ai

Written by Daniel Limanowski and Adam Brown, Senior Attack Engineers at Horizon3.ai.

Kubernetes was supposed to make running containers at scale boring. Instead, it turned “just give the monitoring agent read-only access” into a stealthy path to execute code in every pod on a node, and in many clusters, that effectively means “own everything.”

In this post we’ll walk through the Kubernetes (K8s) nodes/proxy GET remote code execution (RCE) technique originally detailed by security researcher Graham Helton, explain why K8s maintainers have effectively classified it as “working as intended,” and show how we’ve built full coverage for it into the NodeZero® Kubernetes Pentest so you can see exactly which service accounts in your clusters can be turned into shells.

We’ll close with concrete remediation guidance, including how to use KEP‑2862 and vendor RBAC updates to start digging out of this hole even though the underlying behavior is here to stay.

Background: What is Kubernetes?

Kubernetes is an orchestration layer for containers. It schedules pods onto nodes, keeps them running, and exposes APIs for managing workloads, networking, and storage.

Each node runs a kubelet process that actually talks to the container runtime. The kubelet exposes its own HTTP(S) API for metrics, logs, and control operations like /exec and /run. Access to that API is gated by Kubernetes Role-Based Access Control (RBAC), usually via higher‑level resources like nodes/proxy that sit in front of the kubelet.

In theory, you give “read‑only” service accounts just enough access to pull metrics and logs. In practice, for nodes/proxy, “read‑only” is doing a lot of work.

The Vulnerability: nodes/proxy GET to RCE on Any Pod

Research shows that a service account with the nodes/proxy GET permission and network access to the kubelet API can execute arbitrary commands in any pod on reachable nodes (including privileged system pods and control plane workloads) by abusing how the kubelet authorizes WebSocket connections to /exec.

Cluster admins and vendors commonly grant nodes/proxy GET so that agents can:

- scrape node metrics,

- read resource usage summaries,

- and stream container logs.

These interactions look and feel like classic read-only operations. Vendors ship the permissions in Helm charts by default: public code repository scans turned up at least 69 widely used charts that provision nodes/proxy permissions, including stacks like Prometheus, Grafana, Datadog, and Elastic.

That’s rather unsurprising as “read” roles are everywhere, especially with monitoring tools. The problem is what happens when you combine nodes/proxy GET with WebSockets and the kubelet’s /exec endpoint.

How Read-Only Becomes RCE

At a high level, the attack looks like this:

- Start from a pod running under a service account that has a binding to the nodes/proxy GET permission.

- Ensure the pod can reach the kubelet API on https://$NODE_IP:10250.

- Enumerate pods on that node using /pods.

- Open a WebSocket connection to /exec on the kubelet and bypass the expected CREATE verb check.

- Run arbitrary commands inside any target container.

The key bug is how the kubelet translates HTTP/WebSocket semantics into RBAC verbs for authorization.

Two paths to the same kubelet

The nodes/proxy resource is unusual in Kubernetes RBAC. Instead of mapping to a single operation, it’s a catch‑all gate in front of the kubelet API, with two main paths:

- API server proxy path (traditionally seen, widely used): https://$APISERVER/api/v1/nodes/$NODE_NAME/proxy/...

  - Requests traverse the API server, get logged by AuditPolicy (including full pods/exec URIs), and are authorized the “normal” way.

- Direct kubelet API path: https://$NODE_IP:10250/...

  - Requests go straight to the kubelet, do NOT generate pods/exec audit logs (only subjectaccessreviews), and rely on the kubelet’s own authz mapping.

WebSockets, GET, and the missing second check

For traditional HTTP requests, both the API server and kubelet follow the documented mapping of HTTP methods to RBAC verbs: POST → create, GET → get, etc.

Command execution in pods is supposed to require CREATE on pods/exec (or, in this case, nodes/proxy). A POST to /exec via the API server proxy path is correctly mapped to CREATE and denied if the caller only has GET:

    curl -sk -X POST \
      -H "Authorization: Bearer $TOKEN" \
      "$APISERVER/api/v1/nodes/$NODE_NAME/proxy/exec/default/nginx/nginx?command=id&stdout=true"
    # => 403 Forbidden: cannot create resource "nodes/proxy"

But interactive exec over WebSockets works differently:

- WebSockets require an initial HTTP GET request with Connection: Upgrade headers to establish the tunnel, even though the underlying operation is a write (command execution).

- The kubelet looks at that GET, maps it to RBAC verb get, and maps the path /exec/ to the proxy subresource, yielding an authz record of “can this user get nodes/proxy?”.

- If the caller has nodes/proxy GET, the authorization check passes.

- The kubelet upgrades the connection to WebSockets, hands it to the exec handler, and never performs a second check for CREATE.
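The verb mapping described above can be illustrated with a toy model. This is a minimal sketch, not the kubelet's actual code: the names `authz_attributes` and `is_allowed` are hypothetical, and the model assumes every path shown is gated by nodes/proxy, as the text describes for /exec.

```python
# Toy model of the single authorization check described in the text.
# Not the kubelet's implementation; all names here are illustrative.
HTTP_METHOD_TO_VERB = {
    "GET": "get",
    "POST": "create",
    "PUT": "update",
    "PATCH": "patch",
    "DELETE": "delete",
}


def authz_attributes(method: str, path: str) -> tuple[str, str]:
    """Return the (verb, resource) pair checked for a kubelet request.

    Simplification: the path is ignored and everything is treated as
    nodes/proxy, which is how the text describes /exec being handled.
    """
    return HTTP_METHOD_TO_VERB[method.upper()], "nodes/proxy"


def is_allowed(granted: set[tuple[str, str]], method: str, path: str) -> bool:
    """One check on the initial HTTP request; nothing is re-checked later."""
    return authz_attributes(method, path) in granted


# A "read-only" monitoring identity.
granted = {("get", "nodes/proxy")}

# A plain POST to /exec maps to create and is denied.
post_allowed = is_allowed(granted, "POST", "/exec/default/nginx/nginx")
# A WebSocket handshake is a GET, so the same path maps to get and passes.
ws_allowed = is_allowed(granted, "GET", "/exec/default/nginx/nginx")
print(post_allowed, ws_allowed)
```

The point of the sketch is that the decision depends only on the HTTP method of the first request, so the operation that follows the upgrade is never evaluated on its own.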

At that point, the attacker can execute any command over the WebSocket in the target container:

    websocat \
      --insecure \
      --header "Authorization: Bearer $TOKEN" \
      --protocol "v4.channel.k8s.io" \
      "wss://$NODE_IP:10250/exec/default/nginx/nginx?output=1&error=1&command=id"
    # uid=0(root) gid=0(wheel) groups=0(wheel)...
    # {"metadata":{},"status":"Success"}

Why this matters in your clusters

- nodes/proxy GET is widely granted to monitoring, logging, and observability agents: Prometheus, Datadog, Grafana, OpenTelemetry, New Relic, Wiz, and many others.

- Those agents typically run in privileged or system namespaces, often on every node.

- If any of those service accounts can reach port 10250 and has nodes/proxy GET, they can execute code in any pod on that node, including control plane components and privileged system pods like kube-proxy, leading to full cluster compromise.

- Direct kubelet /exec operations do NOT show up as pods/exec in audit logs; you only see the subjectaccessreviews checks, which makes this significantly stealthier than the API-server proxy path.

This is the kind of “read‑only” permission that attackers quietly celebrate and infrastructure teams inherit for years to come.
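Since the subjectaccessreviews checks are the only trace mentioned above, one starting point for triage is to filter API server audit events for them. The sketch below is a rough illustration under stated assumptions: the audit log is JSON lines, the audit policy records request bodies for subjectaccessreviews, and events carry the `objectRef` and `requestObject.spec.resourceAttributes` fields used here. The function names are hypothetical, and a hit shows only that someone was checked for get on nodes/proxy, not that exec took place.

```python
import json


def is_nodes_proxy_get_review(event: dict) -> bool:
    """True if an audit event is an access review for get on nodes/proxy.

    Assumes request bodies are recorded, so requestObject is present.
    """
    ref = event.get("objectRef") or {}
    if ref.get("resource") != "subjectaccessreviews":
        return False
    spec = (event.get("requestObject") or {}).get("spec") or {}
    attrs = spec.get("resourceAttributes") or {}
    return (
        attrs.get("resource") == "nodes"
        and attrs.get("subresource") == "proxy"
        and attrs.get("verb") == "get"
    )


def reviewed_users(lines) -> list[str]:
    """Collect the users named in matching access reviews, in log order."""
    users = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        event = json.loads(line)
        if is_nodes_proxy_get_review(event):
            spec = event["requestObject"]["spec"]
            users.append(spec.get("user", "<unknown>"))
    return users


# Two made-up events: one matching review and one unrelated request.
sample_log = [
    json.dumps({
        "objectRef": {"resource": "subjectaccessreviews"},
        "requestObject": {"spec": {
            "user": "system:serviceaccount:monitoring:agent",
            "resourceAttributes": {
                "resource": "nodes", "subresource": "proxy", "verb": "get",
            },
        }},
    }),
    json.dumps({"objectRef": {"resource": "pods"}, "verb": "list"}),
]
print(reviewed_users(sample_log))
```

Correlating the flagged identities with the list of service accounts that are expected to talk to the kubelet is left to the reader's own tooling.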

“Won’t Fix” and KEP‑2862: The Upstream Story

Researcher Graham Helton reported this issue through the Kubernetes HackerOne program. The Kubernetes Security Team triaged it, discussed it with SIG‑Auth and SIG‑Node, and ultimately closed it as:

“Won’t Fix (Working as Intended)” and decided it would not receive a CVE.

Their reasoning:

- Fixing just this path would require brittle “double authorization” logic across both kubelet and apiserver, which they consider architecturally incorrect.

- They instead point to KEP‑2862: Fine‑Grained Kubelet API Authorization as the long‑term architectural answer.

What KEP‑2862 actually does

KEP‑2862 introduces new, finer‑grained kubelet subresources like:

| Permission | Endpoints (examples) | Use case |

|---|---|---|

| nodes/metrics | /metrics, /metrics/cadvisor, /metrics/… | Metrics collection |

| nodes/stats | /stats, /stats/summary | Resource statistics |

| nodes/log | /logs/ | Node logs |

| nodes/healthz | /healthz, /healthz/ping, /healthz/syncloop | Health checks |

| nodes/pods | /pods, /runningpods | Pod listing/status |

The goal is to give monitoring agents an alternative to the coarse‑grained nodes/proxy permission, so that over time, nodes/proxy can be deprecated for those use cases.

But there are three important caveats:

- Not in General Availability (GA) yet. At the time of this blog post, KEP‑2862 is expected in the Kubernetes v1.36 release slated for April 2026.

- No fine‑grained equivalents for /exec, /run, /attach, or /portforward. Any workload that legitimately needs those still has to use nodes/proxy.

- It does not change the WebSocket verb‑mapping behavior. The mismatch between “WebSockets require GET” and “command execution should require CREATE” for nodes/proxy GET remains.
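To make the split concrete, the table and caveats above can be read as a lookup from kubelet path to the narrowest permission that covers it. The following is a minimal sketch built only from the prefixes listed in the table; `required_resource` is a hypothetical helper, not part of Kubernetes, and it does not model any fallback behavior between permissions.

```python
# Prefixes copied from the KEP-2862 table above; illustrative only.
FINE_GRAINED = [
    ("/metrics", "nodes/metrics"),
    ("/stats", "nodes/stats"),
    ("/logs", "nodes/log"),
    ("/healthz", "nodes/healthz"),
    ("/pods", "nodes/pods"),
    ("/runningpods", "nodes/pods"),
]


def required_resource(path: str) -> str:
    """Return the narrowest permission from the table that covers a path.

    Anything not listed, such as /exec, /run, /attach, or /portforward,
    still lands on the coarse nodes/proxy permission.
    """
    for prefix, resource in FINE_GRAINED:
        if path == prefix or path.startswith(prefix + "/"):
            return resource
    return "nodes/proxy"


for example in ("/metrics/cadvisor", "/stats/summary", "/exec/default/nginx/nginx"):
    print(example, "->", required_resource(example))
```

A monitoring agent that only touches the first two example paths could in principle be moved off nodes/proxy, while anything that needs the third cannot.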

The Kubernetes Security Team is candid about this: they see KEP‑2862 as the way to render nodes/proxy obsolete for monitoring agents, not as a fix for the underlying authz behavior.

In summary, nodes/proxy GET with kubelet access is going to be a dangerous combination for the foreseeable future. The only realistic mitigation path is to restrict usage of nodes/proxy for read‑only use cases.

What It Takes to Exploit nodes/proxy GET in Your Cluster

From an attacker’s perspective, the bar is low. To exploit this behavior, they need:

- A credential with nodes/proxy GET

A minimal vulnerable Role/ClusterRole looks like:

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: nodes-proxy-reader
    rules:
      # Only the get verb on nodes/proxy is granted.
      - apiGroups: [""]
        resources: ["nodes/proxy"]
        verbs: ["get"]

Any service account bound to this role is in scope.

- Network access to the kubelet API on each target node

  - Typically https://$NODE_IP:10250

  - If nodes are treated as a security boundary or the cluster is multi‑tenant, this is far more serious.

- A foothold in any pod using that service account

A kubeconfig committed to Git, SSRF into a pod, or command injection in an app container can turn into an attacker running commands as the service account. From there, they:

- Query /pods on the kubelet to enumerate all pods and containers on the node.

- Use WebSockets to query /exec/$NAMESPACE/$POD/$CONTAINER?...&command=... and run arbitrary commands across pods.

[Figure caption, truncated in source: “… nodes/proxy GET autonomously.”]

NodeZero Coverage: Turning nodes/proxy GET into a First-Class Weakness

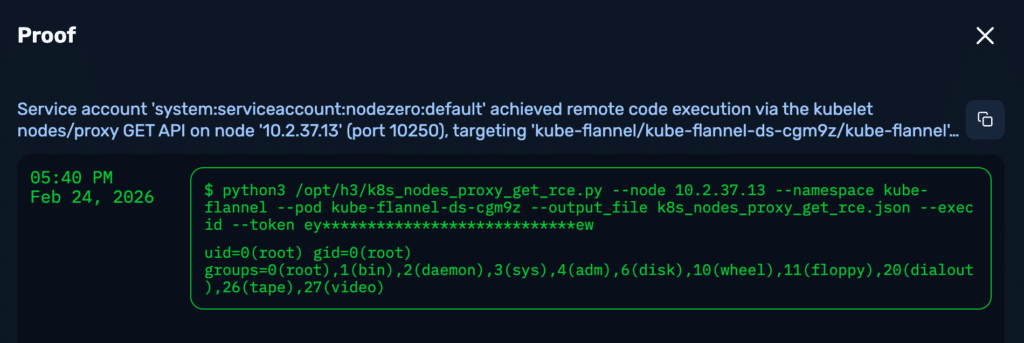

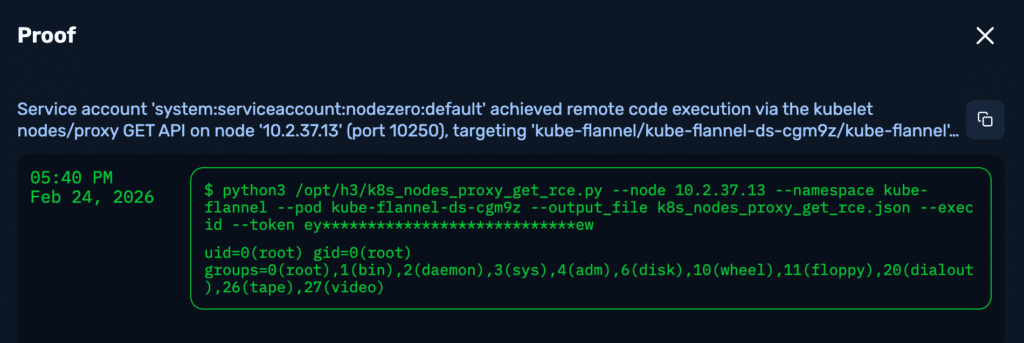

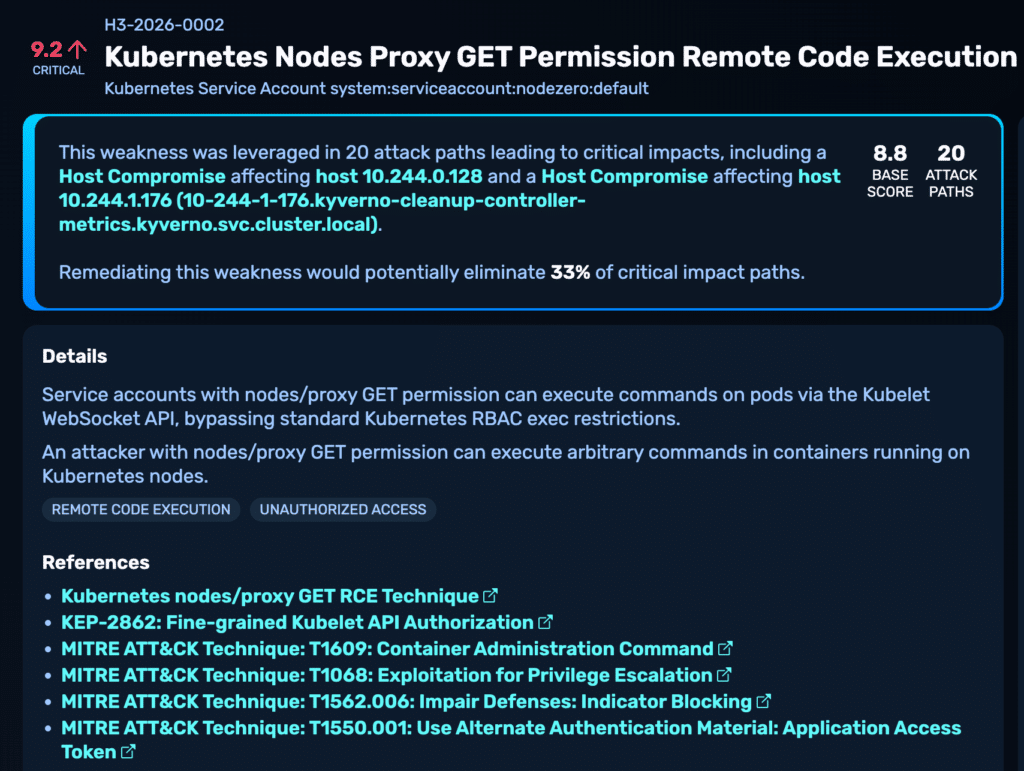

We are introducing this vulnerability as a new first‑class weakness in NodeZero’s Kubernetes Pentest operation, tracked as H3‑2026‑0002.

At a high level, this allows NodeZero to automatically find, safely exploit, and report this weakness if it appears in your cluster.

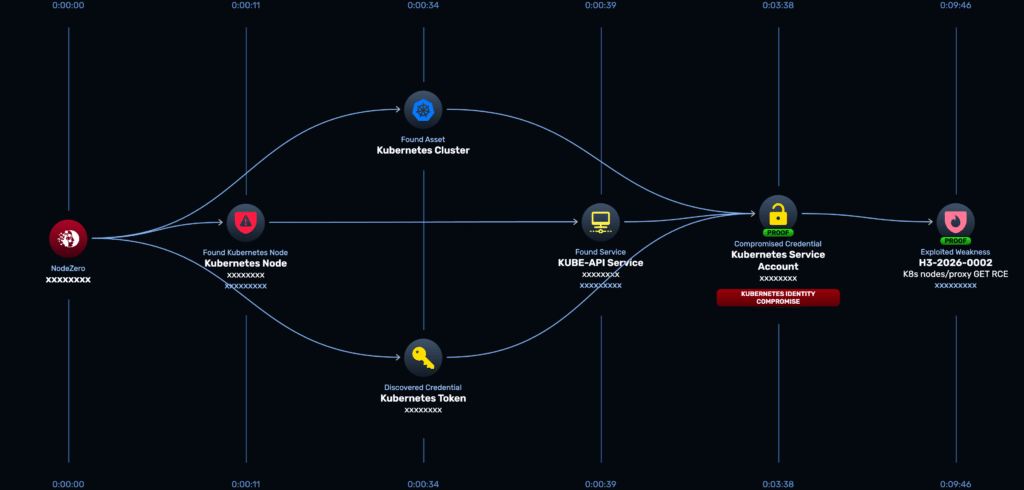

[Figure caption, truncated in source: “… nodes/proxy GET exploitability leveraging credentials it finds in the pentest environment.”]

Where This Fits in the NodeZero Kubernetes Pentest

From a user’s perspective, this behavior is now just part of a normal Kubernetes Pentest:

- You deploy a NodeZero Operator and Kubernetes Runner once per cluster.

- You run Kubernetes Pentests from the portal, CLI, or API, selecting:

  - scope (cluster IP ranges)

  - attack configuration

  - optional runner

  - Kubernetes settings (namespace & service account).

- NodeZero deploys into your cluster, enumerates nodes, pods, and service accounts, and runs a battery of K8s‑specific checks, including this nodes/proxy GET RCE.

Choosing the right footholds: namespace and service account

Our Kubernetes documentation already recommends that you use the pentest to simulate realistic footholds, not just a privileged admin account:

Under Kubernetes Settings for a Kubernetes Pentest, you can specify a namespace and a service account.

Example scenarios:

- pick a system namespace + monitoring service account to simulate a compromised agent (for example, the account used by Datadog or Prometheus), or

- pick a front‑door application namespace (public‑facing workloads) to simulate “attacker popped a pod via the app.”

In both scenarios, the new nodes/proxy GET RCE module gives you a clear answer to:

“If an attacker compromises this pod or service account, how far can they push kubelet?”

Enumerating and abusing service account tokens

Beyond the initial foothold identity, our Kubernetes pentest already does quite a bit with service accounts:

- From the pod where NodeZero runs, we attempt to grab its service account token.

- When NodeZero compromises additional pods or nodes, it will:

  - harvest service account tokens for all pods on the node

  - grab the node identity’s service account token

All of those identities flow into the graph as credentials, triggering the nodes/proxy GET RCE check to test for vulnerable permissions.

Living with a “Won’t Fix”: Remediation Recommendations

Because Kubernetes maintainers are not planning to patch this behavior directly, security teams have to treat nodes/proxy GET as a high‑risk execution capability and harden around it.

KEP-2862 won’t reach GA until April 2026, so keep an eye on it and upgrade clusters when you can. Until then, here’s a set of actions you can take today:

1. Audit and eliminate use of nodes/proxy GET

The priority is to remove the privileged nodes/proxy GET permission from any service account that doesn’t strictly need full Kubelet API access.

- Implement regular RBAC auditing to detect and flag any ClusterRole or Role that grants the get verb on the nodes/proxy resource.

  - Principle of Least Privilege: Create dedicated service accounts for node metrics/logs and strictly limit their scope to the namespaces and nodes they truly require. Avoid using nodes/proxy for multi-purpose operational accounts that hold other sensitive rights.

- Shift from reactive cleanup to proactive prevention of security misconfigurations.

  - Policy-as-Code (PaC): Use PaC tools like OPA Gatekeeper or Kyverno to block the creation of new RBAC rules (i.e., new ClusterRole or Role) that grant nodes/proxy permissions to unauthorized subjects. This prevents accidental re-introduction of the vulnerability.
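The RBAC audit described above can be approximated offline against exported role manifests. This is a minimal sketch under stated assumptions: roles are already loaded as Python dictionaries (for example from JSON output of your cluster tooling), and the helper names `rule_grants_nodes_proxy_get` and `find_risky_roles` are hypothetical. It treats the wildcard forms `*` and `*/proxy` as matching nodes/proxy, and it does not resolve bindings or aggregated roles.

```python
def rule_grants_nodes_proxy_get(rule: dict) -> bool:
    """True if a single RBAC rule allows get on nodes/proxy."""
    api_groups = rule.get("apiGroups", [])
    resources = rule.get("resources", [])
    verbs = rule.get("verbs", [])
    # nodes live in the core API group, written as the empty string.
    group_ok = "" in api_groups or "*" in api_groups
    resource_ok = any(r in ("nodes/proxy", "*/proxy", "*") for r in resources)
    verb_ok = "get" in verbs or "*" in verbs
    return group_ok and resource_ok and verb_ok


def find_risky_roles(roles: list[dict]) -> list[str]:
    """Return the names of roles with at least one matching rule."""
    risky = []
    for role in roles:
        rules = role.get("rules") or []
        if any(rule_grants_nodes_proxy_get(rule) for rule in rules):
            risky.append(role.get("metadata", {}).get("name", "<unnamed>"))
    return risky


# Example input: the minimal role from this post plus two made-up roles.
example_roles = [
    {
        "kind": "ClusterRole",
        "metadata": {"name": "nodes-proxy-reader"},
        "rules": [
            {"apiGroups": [""], "resources": ["nodes/proxy"], "verbs": ["get"]}
        ],
    },
    {
        "kind": "ClusterRole",
        "metadata": {"name": "pod-reader"},
        "rules": [
            {"apiGroups": [""], "resources": ["pods"], "verbs": ["get", "list"]}
        ],
    },
    {
        "kind": "ClusterRole",
        "metadata": {"name": "wildcard-admin"},
        "rules": [{"apiGroups": ["*"], "resources": ["*"], "verbs": ["*"]}],
    },
]
print(find_risky_roles(example_roles))
```

Flagged names are only candidates; each still needs to be traced to the subjects bound to it before deciding what to remove.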

2. Lock down network access to kubelet

RBAC is necessary but not sufficient. Restrict network access to the Kubelet API as a critical second line of defense.

- Restrict Port 10250: Restrict access to https://$NODE_IP:10250 using Network Policies, cloud firewalls, or other network controls.

- Allow-List Only: Ensure that only the Kubernetes API server and explicitly authorized monitoring infrastructure can communicate with the Kubelet on port 10250.

- Prevent Egress: Ensure pods in non-system namespaces cannot directly connect to the Kubelet, even if they somehow gain nodes/proxy GET in the future.

- Monitor Traffic: Monitor and alert on any unexpected network traffic to port 10250 originating from application or non-system namespaces.
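One way to confirm that these network controls hold is to test, from inside a workload, whether the kubelet port accepts TCP connections at all. The sketch below is a minimal reachability probe using only the Python standard library; `can_reach` is a hypothetical helper, and the node address passed to it is whatever applies in your environment. It checks TCP connectivity only and sends no requests to the kubelet.

```python
import socket


def can_reach(host: str, port: int = 10250, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be opened.

    A False result covers refused connections, timeouts, and routing
    failures alike, since all of them surface as OSError.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

Run from a pod in an application namespace, a call such as `can_reach(node_ip)` returning True suggests the egress restrictions above are not yet in effect for that workload.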

3. Run continuous, attacker‑style validation

As researcher Helton notes, this weakness feels a lot like Kerberoasting in Active Directory: architecturally “by design,” widely deployed, and abusable for years.

Even with careful RBAC and eventual KEP‑2862 adoption, new clusters, namespaces, and agents get added all the time. You need something that continuously exercises these paths the way an adversary would, not just a static policy document.

That’s exactly what we’ve built into NodeZero’s Kubernetes Pentest: a recurring, cluster‑wide, attacker‑style assessment of Kubelet and RBAC behavior, including this nodes/proxy GET RCE.

Are my clusters vulnerable?

If your Kubernetes environment:

- uses third‑party monitoring/logging/observability agents

- has service accounts with the nodes/proxy GET permission

- allows pods to reach the kubelet on port 10250

…then you should assume this weakness is in play until you’ve proven otherwise.

With our latest update, NodeZero’s Kubernetes Pentest can automatically:

- discover nodes, pods, service accounts, and their credentials

- surface identities with nodes/proxy GET permission

- safely exercise the WebSocket /exec path against the kubelet

- produce concrete proofs (command outputs, attack paths, and host compromise events) for each successful RCE

[Figure caption, truncated in source: “… nodes/proxy GET weakness if found exploitable in your environment, including actionable remediation recommendations.”]

If you’re responsible for Kubernetes security, this is one of those “trust but verify” moments.

Run a NodeZero Kubernetes Pentest against your clusters, ideally from the same namespaces and service accounts your monitoring agents use, and find out which of your “read‑only” identities can actually run code across pods. Then use the remediation steps above to start turning those paths off before an attacker finds them for you.

If you’re interested in seeing a demo, you can request one here.
