2024-4-22 23:30:0 Author: securityboulevard.com

As an agile open source project, Kubernetes continues to evolve, as does the cloud computing landscape. Keeping up with the latest versions isn’t practical for many organizations, and there are good reasons to not keep up with the very latest version, particularly in the first few weeks after a release. Nevertheless, it’s not a great idea to get too far behind, not only because you may miss out on important security, compatibility, and performance updates, but also because support for older versions ends. If you’re using Amazon Elastic Kubernetes Service (EKS), for example, standard support for 1.24 ended January 31, 2024, 1.25 ends May 1, 2024, followed by 1.26 on June 11, and 1.27 on July 24.

How to Upgrade Your EKS Clusters

EKS is a managed Kubernetes service from Amazon that many organizations use to deploy, manage, and scale containerized applications. This guide walks through the steps you’ll need to take to upgrade your EKS clusters. It includes guidance on when and how to complete these upgrades as well as tools that can make it easier for you to upgrade safely and securely.

How Often to Upgrade Kubernetes

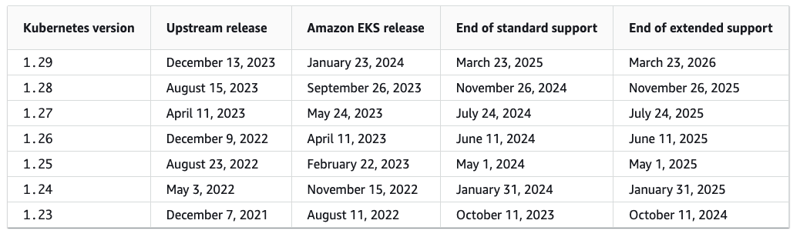

The Kubernetes community follows an N-2 support policy, which means that they provide security fixes and bug patches for the three most recent minor versions. They release new minor versions about three times a year, and minor versions are usually under standard support (for Amazon EKS) for the first 14 months after release. After that, the minor release enters extended support for the next 12 months (at an additional cost per cluster hour). Once the extended support period ends, your EKS cluster is automatically upgraded to the oldest currently supported extended version. This scenario is far from ideal as it gives you little option regarding your upgrade process and you’ll still need to upgrade to a newer release in the near future.

Here’s the schedule for the next few Kubernetes versions:

Source: Amazon EKS Kubernetes versions

For most organizations, expect to assess new Kubernetes releases regularly. Many teams manage multiple versions in different environments. For example, you may test out a new version in your development environment for at least a week or two, and follow that process for test and staging environments. Before pushing a new version to production, make sure you have at least a week of data, so you know you won’t run into unexpected snags on go-live.

Each Kubernetes version includes the control plane and the data plane; make sure that both your control plane and your data plane are running the same Kubernetes minor version. Kubernetes allows some skew between versions, but support varies by Kubernetes component and for different cluster development tools.

- Control plane — In EKS clusters, the control plane is managed by AWS. You can start upgrades to the control plane version using the AWS API.

- Data plane — For our purposes, the data plane version refers to the version of the kubelet running on your nodes. Even in the same cluster, different nodes may be running different versions. You can look up the version of all nodes using the kubectl get nodes command.
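As a rough illustration of the version check described above, the following sketch reports how many minor versions each node's kubelet is behind the control plane. The helper names `minor_of` and `report_skew` are made up for this example, and the node data is sample input rather than real cluster output.

```shell
#!/bin/sh
# Extract the minor number from a version such as "v1.28.5-eks-5e0fdde" -> 28.
minor_of() {
  echo "$1" | sed -E 's/^v?[0-9]+\.([0-9]+).*/\1/'
}

# Read "NODE KUBELET_VERSION" lines on stdin and report how many minor
# versions each node is behind the given control plane version.
report_skew() {
  cp_minor=$(minor_of "$1")
  while read -r node version; do
    [ -n "$node" ] || continue
    echo "$node $version behind=$(( cp_minor - $(minor_of "$version") ))"
  done
}

# Made-up sample data. Against a real cluster, pipe in instead:
#   kubectl get nodes --no-headers \
#     -o custom-columns=NAME:.metadata.name,VERSION:.status.nodeInfo.kubeletVersion
# and read the control plane version with:
#   aws eks describe-cluster --name <cluster name> --query cluster.version --output text
report_skew "1.29" <<'EOF'
ip-10-0-1-10.ec2.internal v1.29.0-eks-5e0fdde
ip-10-0-2-20.ec2.internal v1.28.5-eks-5e0fdde
EOF
```

Any node that reports a value above zero still needs a data plane upgrade to match the control plane.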

Staging EKS Upgrades

Upgrade your environments in the order below: development first, then staging, then production. Outside of upgrade windows, we recommend that your dev/stage/test clusters look as close to production as possible for typical day-to-day operations.

Update the Development Cluster

You’ll want to upgrade your development environment first. This ensures you are keeping up with the latest K8s updates. If you encounter critical issues with the latest version, you can identify problems quickly and find solutions before pushing the latest EKS version to staging.

Push to Staging

The next environment to upgrade is often your staging environment. This is where any remaining issues that haven’t been fixed in the development environment should be caught. This is the last step before production, so it’s often best to allow a “soak time” for changes here — we allow one to two weeks.

Prepare for Production

The goal is to keep your production version aligned as closely to staging as possible. This makes your developers’ lives easier because they don’t need to worry about maintaining code for too many versions. After the agreed upon “soak time,” there should be very little risk in upgrading the production environment, so upgrade it promptly. Don’t fall into the trap of not completing the upgrade cycle because you’re worried about moving it to production.

Note Regarding Minor Version Upgrades

Some practitioners recommend not installing the latest minor version until patch .2. In other words, they might suggest waiting to install the latest Kubernetes version, such as 1.30.0, until 1.30.2 is available. From there, you can begin the upgrade process, moving from dev to staging and then to production. This recommendation stems from years of experience — by the .2 version, extensive testing is complete and major issues have already been discovered and resolved. Often, once you have completed the dev upgrade and rolled it out to staging, the .3 release is available.

Shared Responsibility Model

EKS customers are responsible for initiating upgrades for the cluster control plane and data plane. While AWS handles control plane upgrades, you are responsible for the data plane, including Fargate pods and add-ons.

Cluster Upgrades

EKS supports in-place cluster upgrades, which preserve resource and configuration consistency, minimize user disruption, and retain information about existing workloads and resources. You can only upgrade one minor version at a time.

If you need to make multiple version updates, you’ll have to do sequential upgrades. This approach can increase the risk of downtime. Consider evaluating a blue/green cluster upgrade strategy in this case, where one environment (blue) runs the current Kubernetes version and another environment (green) runs the new Kubernetes version.
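Because each in-place upgrade moves only one minor version, it can help to list the intermediate hops before you start. This is a minimal sketch; `upgrade_path` is a hypothetical helper name, and it assumes both arguments are in `major.minor` form with the same major version.

```shell
#!/bin/sh
# Print every minor version a cluster must pass through, in order, to get
# from the current version to the target (one minor version per upgrade).
upgrade_path() {
  major=${1%%.*}
  from=${1#*.}
  to=${2#*.}
  path=""
  while [ "$from" -lt "$to" ]; do
    from=$(( from + 1 ))
    path="$path${path:+ }$major.$from"
  done
  echo "$path"
}

upgrade_path 1.25 1.28   # prints: 1.26 1.27 1.28
```

A long path is a signal to weigh the blue/green approach against running several sequential in-place upgrades.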

AWS Management of EKS Upgrades

AWS manages the EKS control plane upgrade process to ensure a seamless transition from one Kubernetes version to the next. These are the steps AWS goes through to upgrade the EKS control plane:

- Pre-upgrade checks: AWS conducts pre-upgrade checks, assessing the current cluster state and evaluating the compatibility of the new version with your workloads. The upgrade process will stop if any issues are detected.

- Backup and snapshot: Next, AWS backs up your existing control plane and creates a snapshot of your etcd data store to ensure data consistency and enable you to roll back in case there is an upgrade failure.

- New control plane: AWS now creates a new control plane with your new Kubernetes version; this runs in parallel with your existing control plane.

- Compatibility testing: Next, AWS tests the new control plane compatibility with your workloads, running automated tests to verify that your applications continue to function as expected. It analyzes application health, not APIs that may be deprecated or removed. (Pluto is an open source utility that finds deprecated Kubernetes API versions in your code repositories and Helm releases.)

- Switch control plane endpoints: At this point, AWS switches the control plane endpoints (API server) to the new control plane.

- Terminate the old control plane: Once the upgrade is complete, AWS terminates the old control plane and cleans up all resources associated with it.

Upgrade Sequence

To upgrade an EKS cluster, we recommend you go through the following steps:

- Review release notes from both Kubernetes and EKS.

- Review the compatibility for your add-ons. Upgrade your Kubernetes add-ons and custom controllers; GoNoGo is an open source tool that checks Kubernetes add-ons.

- Identify and remediate the use of deprecated and removed APIs in your workloads. Pluto can help you with this process.

- Make sure (if you use them) Managed Node Groups are on the same K8s version as the control plane. EKS managed node groups and any nodes created by EKS Fargate Profiles support only one minor version skew on the data plane and control plane.

- Back up the cluster (if desired).

- Update the control plane.

- Upgrade the cluster data plane. Upgrade your nodes so they are at the same Kubernetes minor version as your upgraded cluster.

- Update kubectl.
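The control plane and data plane steps in the sequence above could be scripted roughly as follows. This is a sketch under assumptions: the cluster and node group names are placeholders, the `run` and `upgrade_cluster` helpers are invented for this example, and the script only prints the AWS CLI commands unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Print each command by default; set DRY_RUN=0 to actually execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Upgrade the control plane, wait for it to become active again, then
# upgrade one managed node group to the same Kubernetes version.
upgrade_cluster() {
  cluster=$1 version=$2 nodegroup=$3
  run aws eks update-cluster-version --name "$cluster" --kubernetes-version "$version"
  run aws eks wait cluster-active --name "$cluster"
  run aws eks update-nodegroup-version --cluster-name "$cluster" \
      --nodegroup-name "$nodegroup" --kubernetes-version "$version"
}

# Placeholder names; with DRY_RUN unset this only prints the commands.
upgrade_cluster my-cluster 1.29 my-nodegroup
```

Keeping the dry run as the default makes it easy to review the exact commands in a pull request before anyone runs them against a real cluster.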

Create an EKS Upgrade Checklist

EKS Kubernetes version documentation provides a detailed list of changes for each version, which you should use to build a checklist for each upgrade. For guidance on specific EKS version upgrades, check the documentation to identify important changes and considerations for each version.

Upgrade Critical Add-ons and Components

Before you begin a cluster upgrade, make sure you understand what versions of Kubernetes components are in use. Inventory your cluster components, particularly the ones that interact with the Kubernetes API directly. Your typical cluster includes multiple workloads that rely on the Kubernetes API, which provide important functionalities. These cluster components typically include:

- Cluster autoscalers

- Container network interfaces

- Container storage drivers

- Continuous delivery systems

- Ingress controllers

- Monitoring and logging agents

Make sure you check for any other workloads or add-ons that interact directly with the Kubernetes API. You can sometimes identify critical cluster components by looking at namespaces that end in *-system. Next, refer to the documentation of those critical components to evaluate version compatibility and whether there are any prerequisites for upgrading. Some components may require you to make updates or adjust your configuration before you upgrade your cluster.

Here are some common add-ons (linked to upgrade documentation):

- Amazon VPC CNI plugin for Kubernetes self-managed add-on. You can only upgrade one minor version at a time if you installed it as an Amazon EKS Add-on.

- Kubernetes kube-proxy self-managed add-on.

- CoreDNS self-managed add-on.

- AWS Load Balancer Controller. The AWS Load Balancer Controller must be compatible with the EKS version you deploy.

- Amazon Elastic Block Store (Amazon EBS) Container Storage Interface (CSI) driver.

- Amazon Elastic File System (Amazon EFS) CSI driver.

- Kubernetes Metrics Server.

- Kubernetes Cluster Autoscaler. The Cluster Autoscaler is coupled tightly with the Kubernetes scheduler and always needs to be upgraded when you upgrade the cluster. Find the address of the latest release that corresponds to your Kubernetes minor version.

- Karpenter.

- Service mesh, such as Linkerd2 or Istio.

Some add-ons, such as the VPC CNI plugin and kube-proxy, can be installed via Amazon EKS Add-ons, which lets you manage them through the EKS API instead of installing and updating them yourself. Consider managing those add-ons this way, as it enables you to update add-on versions with a single command. For example:

aws eks update-addon --cluster-name my-cluster --addon-name vpc-cni --addon-version version-number \
    --service-account-role-arn arn:aws:iam::111122223333:role/role-name --configuration-values '{}' --resolve-conflicts PRESERVE

To check whether you have any EKS Add-ons, type:

aws eks list-addons --cluster-name <cluster name> --output table

You’ll see output that looks similar to this:

------------------
|   ListAddons   |
+----------------+
||    addons    ||
|+--------------+|
||  coredns     ||
||  kube-proxy  ||
||  vpc-cni     ||
|+--------------+|

Note: Amazon does not automatically upgrade EKS Add-ons during a control plane upgrade. You must initiate EKS add-on updates and select the version you want to update to. Make sure you pick a compatible version from all available versions using this guidance on add-on version compatibility. Remember, you can only upgrade Amazon EKS Add-ons one minor version at a time.
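To illustrate the one-minor-version-at-a-time rule for EKS Add-ons, here is a small sketch that checks a planned add-on version jump before you request it. The helper names and version strings are illustrative only, and the check assumes both versions share the same major number.

```shell
#!/bin/sh
# Minor number of an add-on version such as "v1.16.0-eksbuild.1" -> 16.
addon_minor() {
  echo "$1" | sed -E 's/^v?[0-9]+\.([0-9]+).*/\1/'
}

# Succeed only if moving from $1 to $2 crosses at most one minor version.
single_minor_step() {
  [ $(( $(addon_minor "$2") - $(addon_minor "$1") )) -le 1 ]
}

# Illustrative version strings. To list the versions EKS actually offers:
#   aws eks describe-addon-versions --addon-name vpc-cni --kubernetes-version 1.29
if single_minor_step v1.15.1-eksbuild.1 v1.16.0-eksbuild.1; then
  echo "ok: single minor step"
fi
if ! single_minor_step v1.14.1-eksbuild.1 v1.16.0-eksbuild.1; then
  echo "blocked: upgrade to a 1.15 release first"
fi
```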

Verify EKS Requirements

AWS requires several specific resources in your account to upgrade a control plane, including:

- IP addresses: Amazon EKS requires that up to five IP addresses are available from the subnets you specified when you created your cluster.

Make sure your subnets have enough IP addresses to upgrade the cluster:

CLUSTER=<cluster name>
aws ec2 describe-subnets --subnet-ids \
    $(aws eks describe-cluster --name ${CLUSTER} \
    --query 'cluster.resourcesVpcConfig.subnetIds' \
    --output text) \
    --query 'Subnets[*].[SubnetId,AvailabilityZone,AvailableIpAddressCount]' \
    --output table

----------------------------------------------------
|                  DescribeSubnets                 |
+---------------------------+--------------+-------+
|  subnet-0ce25bacdb030ce4f |  us-west-2a  |  8136 |
|  subnet-0c173097d592e96e4 |  us-west-2c  |  8051 |
|  subnet-06a36d93ad471d420 |  us-west-2b  |  8127 |
+---------------------------+--------------+-------+

(You can use the VPC CNI Metrics Helper to create a CloudWatch dashboard for virtual private cloud (VPC) metrics.)

- EKS IAM: The control plane Identity and Access Management (IAM) role must be present in the account with the necessary permissions.

- EKS security group: The control plane primary security group must be available in the account with the required access rules.

- Cluster IAM role permissions: If you have secret encryption enabled in your cluster, make sure the cluster IAM role has permission to use the AWS Key Management Service (AWS KMS) key.
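The subnet IP address requirement above lends itself to an automated pre-flight check. The sketch below flags subnets that have fewer free addresses than a threshold; `low_ip_subnets` is a hypothetical helper, and the subnet IDs and counts are made-up sample input.

```shell
#!/bin/sh
# Read "SUBNET_ID AVAILABLE_IPS" lines on stdin and print any subnet with
# fewer free addresses than the minimum (default 5).
low_ip_subnets() {
  awk -v min="${1:-5}" 'NF >= 2 && $2 + 0 < min { print $1 " has only " $2 " free IPs" }'
}

# Made-up sample. For a real cluster, pipe in the text output of
# aws ec2 describe-subnets with
#   --query 'Subnets[*].[SubnetId,AvailableIpAddressCount]' --output text
low_ip_subnets 5 <<'EOF'
subnet-aaaa1111 8136
subnet-bbbb2222 3
EOF
```

An empty result means every subnet passed the threshold.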

Open Source Tools for EKS Upgrades

The cloud native ecosystem continues to expand and mature, so it’s unsurprising that there are a lot of open source tools available to help teams navigate Kubernetes upgrades. Here are a few options you can use to help you with your EKS upgrades, with some examples and descriptions.

Pluto

Pluto is an open source tool from Fairwinds that looks for the use of deprecated apiVersions. Pluto supports scanning a live cluster, manifest files, and Helm charts. It also provides a GitHub Action that you can include in your CI process. Pluto tells you whether it is safe to upgrade by checking whether your configuration or Helm charts call deprecated or removed API paths. You can run Pluto against local files using the command:

pluto detect-files

You can also check Helm using the command:

pluto detect-helm -owide

It’s pretty easy to add this to CI; this is helpful for people who manage many clusters.
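One way to wire Pluto into CI is to act on its exit code. The sketch below maps exit codes to a pipeline verdict. The codes used here (2 for deprecated, 3 for removed) follow Pluto's documented convention as I understand it, so verify them against the Pluto version you run; `pluto_verdict` and the `./manifests` path are assumptions for illustration.

```shell
#!/bin/sh
# Translate a Pluto exit code into a CI decision. Assumed convention:
# 0 = clean, 2 = deprecated apiVersions found, 3 = removed apiVersions found.
pluto_verdict() {
  case "$1" in
    0) echo "pass: no deprecated or removed apiVersions" ;;
    2) echo "warn: deprecated apiVersions found" ;;
    3) echo "fail: removed apiVersions found" ;;
    *) echo "error: pluto exited with code $1" ;;
  esac
}

# In a pipeline, capture the exit code of a real scan, for example:
#   pluto detect-files -d ./manifests --target-versions k8s=v1.29.0
#   pluto_verdict "$?"
pluto_verdict 3
```

Treating deprecations as warnings and removals as failures lets teams fix deprecated APIs on their own schedule while still blocking changes that would break on the target version.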

Helm and API resources (in-cluster)

$ pluto detect-all-in-cluster -o wide 2>/dev/null
NAME             NAMESPACE  KIND               VERSION                    REPLACEMENT           DEPRECATED  DEPRECATED IN  REMOVED  REMOVED IN
testing/viahelm  viahelm    Ingress            networking.k8s.io/v1beta1  networking.k8s.io/v1  true        v1.19.0        true     v1.22.0
webapp           default    Ingress            networking.k8s.io/v1beta1  networking.k8s.io/v1  true        v1.19.0        true     v1.22.0
eks.privileged              PodSecurityPolicy  policy/v1beta1                                   true        v1.21.0        false    v1.25.0

This combines all available in-cluster detections, showing results from Helm releases and API resources.

Another example of Pluto output for a single resource:

NAME             KIND               VERSION         REPLACEMENT  REMOVED  DEPRECATED  REPL AVAIL
eks.privileged   PodSecurityPolicy  policy/v1beta1               false    true        true

Once you identify which workloads and manifests need updating, you may find that you need to change the resource version in your manifest files (for example, change networking.k8s.io/v1beta1 to networking.k8s.io/v1). This may require you to update the resource specification as well. You may need to do additional research, depending on which resource you are replacing.

If the resource type stays the same and only the API version needs to be updated, you can use the kubectl-convert command to convert your manifest files automatically. For example, to convert an older Deployment to apps/v1, use a command of this form:

kubectl-convert -f <file> --output-version <group>/<version>

Refer to install kubectl convert plugin on the Kubernetes website if you would like more information.

Nova

Nova is another open source utility from Fairwinds that helps you check your Helm releases to see if there are upgrades needed. Typically, the CNI and other dependencies are installed with Helm. Nova is a fast method you can use to ensure you are running the latest version. As always, check the patch notes to verify support for the version you are targeting.

Install the golang binary and run it against your cluster.

$ go install github.com/fairwindsops/nova@latest

$ nova find

Release Name      Installed    Latest     Old     Deprecated
============      =========    ======     ===     ==========
cert-manager      v0.11.0      v0.15.2    true    false
insights-agent    0.21.0       0.21.1     true    false
grafana           2.1.3        3.1.1      true    false
metrics-server    2.8.8        2.11.1     true    false
nginx-ingress     1.25.0       1.40.3     true    false

To check for outdated container images instead of Helm releases:

$ nova find --containers

Container Name                        Current Version    Old     Latest     Latest Minor     Latest Patch
==============                        ===============    ===     ======     =============    =============
k8s.gcr.io/coredns/coredns            v1.8.0             true    v1.8.6     v1.8.6           v1.8.6
k8s.gcr.io/etcd                       3.4.13-0           true    3.5.3-0    3.4.13-0         3.4.13-0
k8s.gcr.io/kube-apiserver             v1.21.1            true    v1.23.6    v1.23.6          v1.21.12
k8s.gcr.io/kube-controller-manager    v1.21.1            true    v1.23.6    v1.23.6          v1.21.12
k8s.gcr.io/kube-proxy                 v1.21.1            true    v1.23.6    v1.23.6          v1.21.12
k8s.gcr.io/kube-scheduler             v1.21.1            true    v1.23.6    v1.23.6          v1.21.12

kubepug

Officially called KubePug/Deprecations, this open source tool is a pre-upgrade checker that helps users find deprecated and removed APIs in their K8s clusters before upgrading. It functions as a kubectl plugin and includes these capabilities:

- Downloads a generated data.json file that contains API deprecation information for a specified release of Kubernetes.

- Scans a running Kubernetes cluster, determining whether any objects will be affected by deprecation.

- Displays affected objects to the user.

Features

- Runs against a Kubernetes cluster using kubeconfig or the active cluster.

- Can be executed against a distinct set of manifests or files.

- Allows you to specify the target Kubernetes version for validation.

- Delivers information on the replacement API that you should adopt.

- Includes detailed information about the version in which the API was deprecated or removed, based on the target cluster version.

Run the following command to install kubepug as a Krew plugin:

kubectl krew install deprecations

eksup

eksup is a command line interface (CLI) that is designed to provide users with comprehensive information and tools to prepare clusters for an upgrade. It can help streamline the upgrade process by providing relevant insights and actions.

A CLI to aid in upgrading Amazon EKS clusters

Usage: eksup <COMMAND>

Commands:
  analyze  Analyze an Amazon EKS cluster for potential upgrade issues
  create   Create artifacts using the analysis data
  help     Print this message or the help of the given subcommand(s)

Options:
  -h, --help     Print help
  -V, --version  Print version

Functions

- Analyze Clusters: Use eksup to assess your clusters against the next Kubernetes version to identify issues that could impact the upgrade process.

- Generate Playbooks: Generate custom playbooks outlining the upgrade steps based on your cluster’s analysis results, including the necessary actions and remediations.

- Edit Playbooks: The playbooks generated are editable, enabling you to adapt the upgrade steps so they align with your cluster configurations and business needs. You can also document insights you gained during the upgrade process.

- Increase Collaboration: Upgrades are frequently initiated on non-production clusters first, so you can capture any additional steps or insights you discover during this phase and use them to improve the upgrade process for production clusters.

- Preserve Historical Artifacts: You can preserve your playbooks as historical references. This helps you make sure each upgrade cycle leverages previous learnings, improving your efficiency in future upgrades.

GoNoGo

GoNoGo is another open source tool from Fairwinds. It helps you define and discover whether an add-on installed with Helm is safe to upgrade.

gonogo --help

The Kubernetes Add-On Upgrade Validation Bundle is a spec that can be used to define and then discover if an add-on upgrade is safe to perform.

Usage:
  gonogo [flags]
  gonogo [command]

Available Commands:
  check       Check for Helm releases that can be updated
  completion  Generate the autocompletion script for the specified shell
  help        Help about any command
  version     Prints the current version of the tool.

Flags:
  -h, --help   help for gonogo
  -v, --v      Level number for the log level verbosity

Use "gonogo [command] --help" for more information about a command.

Velero

Another community supported open source tool you can use is Velero, which enables you to create backups of existing clusters and then apply the backups to a new cluster. AWS resources, including IAM, are not included in a Velero backup, so you will need to recreate them.

Additional Guidance to Improve Your EKS Upgrade Process

Configure PodDisruptionBudgets and topologySpreadConstraints

To make sure your workloads remain available during a data plane upgrade, you need to configure PodDisruptionBudgets and topologySpreadConstraints appropriately. Keep in mind that not all workloads demand the same level of availability, so assess your workload’s scale and requirements.

If workloads are distributed across multiple Availability Zones and hosts with topology spreads, that improves the likelihood that migrations to the new data plane will happen without disruptions.

This is an example of a workload configuration that keeps at least 80% of replicas available during voluntary disruptions such as node drains, and spreads replicas across zones and hosts:

# Source: basic-demo/templates/deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: demo-basic-demo
  labels:
    app.kubernetes.io/name: basic-demo
    app.kubernetes.io/instance: demo
spec:
  selector:
    matchLabels:
      app.kubernetes.io/name: basic-demo
      app.kubernetes.io/instance: demo
  template:
    metadata:
      labels:
        app.kubernetes.io/name: basic-demo
        app.kubernetes.io/instance: demo
    spec:
      topologySpreadConstraints:
        - maxSkew: 1
          topologyKey: kubernetes.io/hostname
          whenUnsatisfiable: ScheduleAnyway
          # Count only this workload's pods when computing skew.
          labelSelector:
            matchLabels:
              app.kubernetes.io/name: basic-demo
              app.kubernetes.io/instance: demo
        - maxSkew: 1
          # Well-known node label for the Availability Zone.
          topologyKey: topology.kubernetes.io/zone
          whenUnsatisfiable: DoNotSchedule
          labelSelector:
            matchLabels:
              app.kubernetes.io/name: basic-demo
              app.kubernetes.io/instance: demo
      containers:
        - name: basic-demo
          image: "quay.io/fairwinds/docker-demo:1.2.0"
          imagePullPolicy: Always
          ports:
            - name: http
              containerPort: 8080
              protocol: TCP
---
# Source: basic-demo/templates/pdb.yaml
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: demo-basic-demo
spec:
  minAvailable: 80%
  selector:
    matchLabels:
      app.kubernetes.io/name: basic-demo
      app.kubernetes.io/instance: demo
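A percentage-based minAvailable translates into a different number of evictable pods depending on the replica count, which matters when nodes are drained during a data plane upgrade. The following sketch works through the arithmetic, assuming all replicas are healthy and that Kubernetes rounds the required pod count up; `allowed_disruptions` is a name invented for this example.

```shell
#!/bin/sh
# Number of pods that may be evicted at once for a PodDisruptionBudget with
# a percentage minAvailable. The required count is rounded up.
allowed_disruptions() {
  replicas=$1 pct=$2
  required=$(( (replicas * pct + 99) / 100 ))
  echo $(( replicas - required ))
}

for n in 1 5 10; do
  echo "replicas=$n allowed_disruptions=$(allowed_disruptions "$n" 80)"
done
```

With a single replica the budget allows zero disruptions, so a node drain would wait indefinitely on that pod. Scale such workloads up, or loosen the budget, before upgrading nodes.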

AWS Resilience Hub

The AWS Resilience Hub now includes EKS as a supported resource. This provides a single place where you can define, validate, and track the resilience of your applications. This helps you avoid unnecessary downtime caused by infrastructure, software, or operational disruptions.

Use Managed Node Groups or Karpenter

Managed Node Groups and Karpenter both simplify node upgrades, taking different approaches. Managed node groups automate node provisioning and lifecycle management, which means you can create, automatically update, or terminate nodes with a single operation.

Karpenter creates new nodes automatically using the latest compatible EKS Optimized Amazon Machine Image (AMI). When EKS releases updated EKS Optimized AMIs or you upgrade the cluster, Karpenter starts using these images automatically. It also uses Node Expiry to update nodes. You can configure Karpenter to use custom AMIs, but keep in mind that if you do, you’re responsible for the version of kubelet.

Automate Upgrades for Self-Managed Node Groups

Self-managed node groups are Amazon Elastic Compute Cloud (EC2) instances that were deployed in your account and attached to the cluster outside of the EKS service. Usually, these node groups are deployed and managed by some form of automation tooling, such as eksctl, kOps, or EKS Blueprints. Refer to your tool’s documentation to upgrade self-managed node groups.

Back Up the Cluster

Unsurprisingly, new versions of Kubernetes introduce significant changes to your Amazon EKS cluster. Remember that once you upgrade a cluster, you can’t downgrade it. And you can only create new clusters for Kubernetes versions that are currently supported by EKS. If you are concerned about this risk, you may want to consider backing up the cluster before an upgrade.

Stay Informed about K8s Versions

Although you may feel like you only have time to focus on the current version of Kubernetes, it’s important to monitor for new releases and identify significant changes. For example, the most important change when migrating from 1.23 to 1.24 was the removal of dockershim from the kubelet. Dockershim was an adapter embedded in the kubelet that allowed it to talk to the Docker daemon, which does not implement the Container Runtime Interface (CRI). With dockershim removed in 1.24, the kubelet communicates directly with the container runtime through the CRI when launching and managing containers on the nodes. As of version 1.24, EKS AMIs include only containerd as the runtime. Preparing for substantial changes like these requires additional time and planning.
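For a change like the dockershim removal, a quick inventory of node container runtimes shows which nodes would have been affected. This is a sketch with made-up node names; `docker_nodes` is a hypothetical helper that filters the runtime column reported by each node.

```shell
#!/bin/sh
# Read "NODE RUNTIME" lines on stdin and print nodes still using Docker.
docker_nodes() {
  awk '$2 ~ /^docker:\/\// { print $1 }'
}

# Made-up sample. For a real cluster, pipe in:
#   kubectl get nodes --no-headers \
#     -o custom-columns=NAME:.metadata.name,RUNTIME:.status.nodeInfo.containerRuntimeVersion
docker_nodes <<'EOF'
node-a containerd://1.6.19
node-b docker://20.10.17
EOF
```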

Review all of the documented changes for the version you plan to upgrade to (check the Kubernetes changelog), noting any required upgrade procedures. Also pay attention to requirements or processes specific to Amazon EKS managed clusters. This approach will help you have a smoother upgrade process and minimize potential disruptions.

Important Kubernetes Changes

Below is a list of some of the most well-known changes (many of which are breaking) in Kubernetes versions, starting with v1.24. This is not a complete list; please refer to the release notes for your version of Kubernetes.

Kubernetes v1.24

- Removal of Dockershim from Kubelet. The Kubernetes blog has a great write-up on the what, how, and why.

Kubernetes v1.25

- Several deprecated APIs are no longer served. Please see the official Kubernetes Deprecated API Migration Guide.

Kubernetes v1.26

- Several deprecated APIs are no longer served. Please see the official Kubernetes Deprecated API Migration Guide.

- GlusterFS in-tree storage driver has been removed.

Kubernetes v1.27

- In-tree storage providers for AWS (and the EBS storage plugin) have been removed. You must use external CSI drivers.

Kubernetes v1.28

- In-tree CephFS volume plugin is deprecated. Please migrate to the external driver.

Kubernetes v1.29

- Only Kubeadm changes.

Major Changes for Kubernetes v1.29

- ReadWriteOncePod PersistentVolume access mode (SIG Storage)

- Node volume expansion Secret support for CSI drivers (SIG Storage)

- KMS v2 encryption at rest generally available (SIG Auth)

- Node lifecycle separated from taint management (SIG Scheduling)

- Clean up for legacy Secret-based ServiceAccount tokens (SIG Auth)

Major Changes for Kubernetes v1.30

- Structured parameters for dynamic resource allocation (KEP-4381)

- Node memory swap support (KEP-2400)

- Support user namespaces in pods (KEP-127)

- Structured authorization configuration (KEP-3221)

- Container resource based pod autoscaling (KEP-1610)

- CEL for admission control (KEP-3488)

Securely Upgrading EKS Clusters

Hopefully, the information outlined in this guide is useful to you. Consistently upgrading Kubernetes requires research and effort; you need to ensure that you have time to test your environments with each minor release. If you follow these steps, you should be in good shape to undertake upgrading EKS clusters. If you need help with your next EKS upgrade, reach out. Our team has the Kubernetes expertise to make the upgrade easy for you and make your Kubernetes infrastructure more efficient at the same time, saving you time and money.


*** This is a Security Bloggers Network syndicated blog from Fairwinds | Blog authored by Stevie Caldwell. Read the original post at: https://www.fairwinds.com/blog/guide-securely-upgrading-eks-clusters
