与传统的Prompt或Chaining技术相比,“Guidance”能够更有效地控制LLM语言模型。“Guidance”程序允许您将generation、prompting和业务逻辑控制交织成一 2023-7-18 11:46:0 Author: www.cnblogs.com(查看原文) 阅读量:17 收藏

与传统的Prompt或Chaining技术相比,“Guidance”能够更有效地控制LLM语言模型。

“Guidance”程序允许您将generation、prompting和业务逻辑控制交织成一个连续的pipeline流程,并与LLM模型实际处理文本的过程相匹配,例如:

- Simple output structures:如“Chain of Thought”以及相应变体(ART、Auto-CoT等)

- richer structure:GPT-4 等更强大的LLM的出现允许更丰富的结构,“Guidance”使输出该结构更容易、更便宜。

“Guidance”相比其他LLM开发框架的优势特性:

- 简单、直观的语法,基于 Handlebars 模板

- 丰富的输出结构,具有multiple generations、selections、conditionals、tool use等

- Jupyter/VSCode Notebooks中,内置类似 Playground 的流式输出方式

- 基于种子的智能生成缓存(generation caching)

- 支持基于角色的聊天模型(例如 ChatGPT)

- 与 Hugging Face 模型轻松集成,包括用于加速standard prompting的guidance acceleration、用于优化prompt boundaries的token healing、以及用于强制执行格式(enforce formats)的正则表达式模式指南(regex pattern guides)

参考链接:

https://github.com/microsoft/guidance

“Guidance”通过在notebook中将complex templates、generations串接为一个持续的prompt开发流,从而加快开发周期。

乍一看,Guidance 感觉像是一种模板语言,就像标准的 Handlebars 模板一样,您可以进行变量插值(例如,{{proverb}})和逻辑控制。 但与标准模板语言不同的是,guidance程序具有明确定义的线性执行顺序,该顺序直接对应于语言模型处理的token顺序。这意味着在执行期间的任何时候,语言模型都可以用于生成文本(使用 {{gen}} 命令)或做出逻辑控制流决策。

这种生成(generation)和提示(prompting)的交错允许开发者构建精确地prompt结构,从而更好地和业务流程融合,基于业务动态数据构建出prompt。

import guidance # set the default language model used to execute guidance programs guidance.llm = guidance.llms.OpenAI("text-davinci-003") # define a guidance program that adapts a proverb program = guidance("""Tweak this proverb to apply to model instructions instead. {{proverb}} - {{book}} {{chapter}}:{{verse}} UPDATED Where there is no guidance{{gen 'rewrite' stop="\\n-"}} - GPT {{#select 'chapter'}}9{{or}}10{{or}}11{{/select}}:{{gen 'verse'}}""") # execute the program on a specific proverb executed_program = program( proverb="Where there is no guidance, a people falls,\nbut in an abundance of counselors there is safety.", book="Proverbs", chapter=11, verse=14 )

程序执行后,所有生成的变量现在都可以轻松访问:

executed_program["rewrite"] ', a model fails,\nbut in an abundance of instructions there is safety.'

Guidance 通过在统一的角色标签(例如,{{#system}}...{{/system}})基础上,引入 API-based chat mode(如 GPT-4)或者open chat models(如 Vicuna)能力,实现一种融合了富文本模板和逻辑控制流的交互式对话开发。

# connect to a chat model like GPT-4 or Vicuna gpt4 = guidance.llms.OpenAI("gpt-4") # vicuna = guidance.llms.transformers.Vicuna("your_path/vicuna_13B", device_map="auto") experts = guidance(''' {{#system~}} You are a helpful and terse assistant. {{~/system}} {{#user~}} I want a response to the following question: {{query}} Name 3 world-class experts (past or present) who would be great at answering this? Don't answer the question yet. {{~/user}} {{#assistant~}} {{gen 'expert_names' temperature=0 max_tokens=300}} {{~/assistant}} {{#user~}} Great, now please answer the question as if these experts had collaborated in writing a joint anonymous answer. {{~/user}} {{#assistant~}} {{gen 'answer' temperature=0 max_tokens=500}} {{~/assistant}} ''', llm=gpt4) experts(query='How can I be more productive?')

当在单个 Guidance 程序中使用多个 generation 或 LLM-directed control flow statements 时,我们可以在prompt提示过程中通过重用键/值缓存来显着提高推理性能。

这意味着 guidance 只要求LLM生成下面的绿色文本,而不是整个程序。与标准生成方法相比,这将该prompt提示的运行时间缩短了一半。

# we use LLaMA here, but any GPT-style model will do llama = guidance.llms.Transformers("your_path/llama-7b", device=0) # we can pre-define valid option sets valid_weapons = ["sword", "axe", "mace", "spear", "bow", "crossbow"] # define the prompt character_maker = guidance("""The following is a character profile for an RPG game in JSON format. ```json { "id": "{{id}}", "description": "{{description}}", "name": "{{gen 'name'}}", "age": {{gen 'age' pattern='[0-9]+' stop=','}}, "armor": "{{#select 'armor'}}leather{{or}}chainmail{{or}}plate{{/select}}", "weapon": "{{select 'weapon' options=valid_weapons}}", "class": "{{gen 'class'}}", "mantra": "{{gen 'mantra' temperature=0.7}}", "strength": {{gen 'strength' pattern='[0-9]+' stop=','}}, "items": [{{#geneach 'items' num_iterations=5 join=', '}}"{{gen 'this' temperature=0.7}}"{{/geneach}}] }```""") # generate a character character_maker( id="e1f491f7-7ab8-4dac-8c20-c92b5e7d883d", description="A quick and nimble fighter.", valid_weapons=valid_weapons, llm=llama )

大多数语言模型使用的standard greedy tokenizations引入了微妙而强大的偏差,可能会对prompt提示产生各种意想不到的后果。

guidance使用一种称为“token healing”的流程,可以自动消除这些令人惊讶的偏差,使开发者能够专注于设计所需的prompt提示,而不必担心tokenization效果。

考虑以下示例,我们尝试生成 HTTP URL 字符串:

# we use StableLM as an open example, but these issues impact all models to varying degrees guidance.llm = guidance.llms.Transformers("stabilityai/stablelm-base-alpha-3b", device=0) # we turn token healing off so that guidance acts like a normal prompting library program = guidance('''The link is <a href="http:{{gen max_tokens=10 token_healing=False}}''') program()

![]()

上图中注意到一个现象,LLM 生成的输出不会使用明显的next token(http:之后的两个正斜杠)来完成 URL。 相反,它会创建一个中间有空格的无效 URL 字符串。

为什么? 因为字符串“://”是它自己的标记(1358),所以一旦模型看到它自己的冒号(标记27),它就假设接下来的字符不能是“//”; 否则,分词器不会使用 27,而是会使用 1358(“://”的标记)。

这种偏差不仅限于冒号字符,它无处不在。

上面使用的 StableLM 模型的 10k 个最常见标记中,超过 70% 是常见标记前缀token,因此当它们是提示中的最后一个标记时,会导致标记边界偏差。 例如,

- “:”标记 27 有 34 个可能的扩展

- “the”标记 1735 有 51 个扩展

- “”(空格)标记 209 有 28,802 个扩展

为了解决这个问题,guidance生成了一个token备份模型,当遇到生成前缀与最后一个令牌匹配时,使用token备份模型进行预测,以此来消除这些偏差。

这种“令牌修复”过程消除了令牌边界偏差,并允许任何提示自然完成:

guidance('The link is <a href="http:{{gen max_tokens=10}}')()

![]()

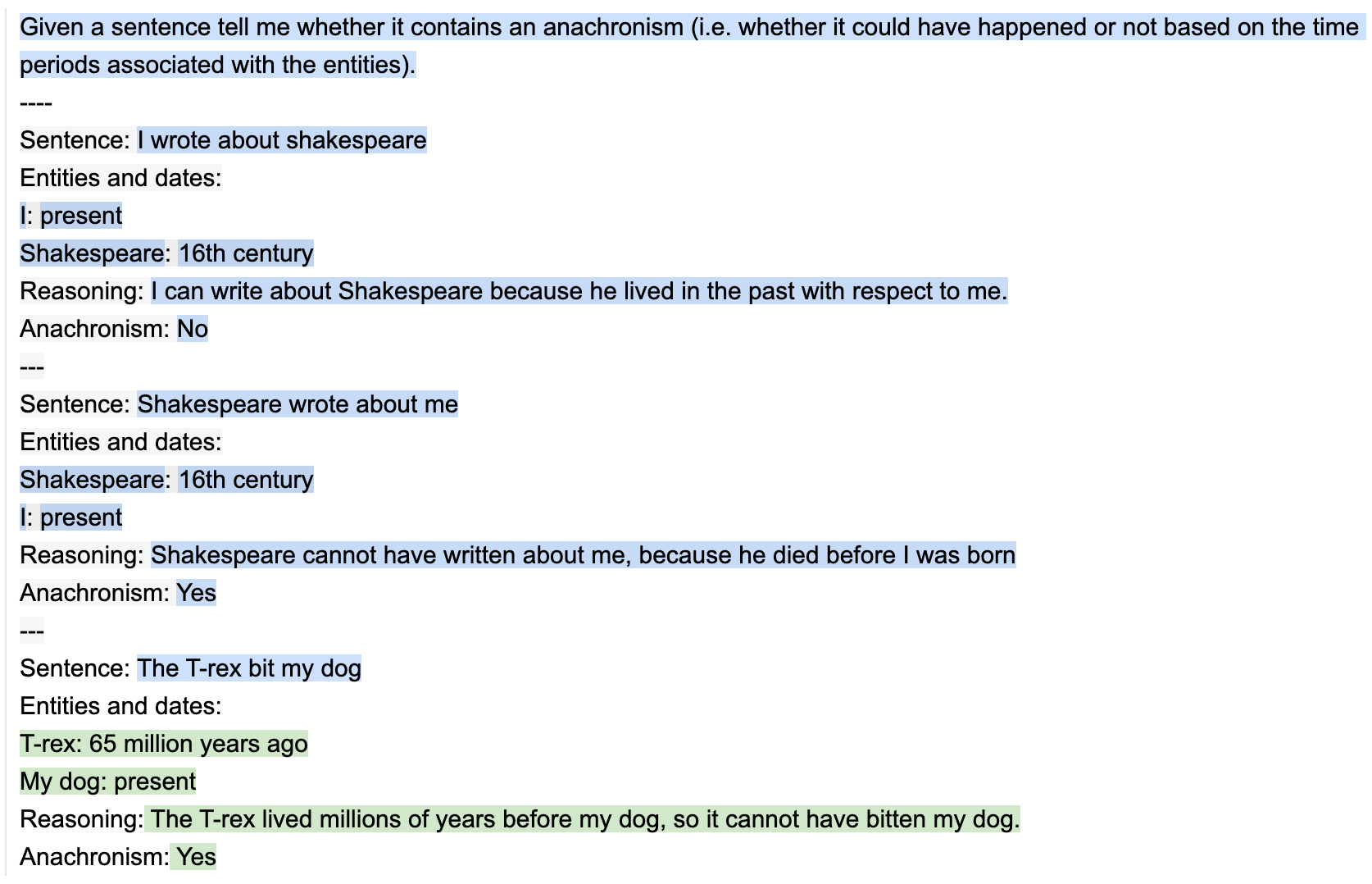

为了证明输出结构(output structure)的价值,我们从 BigBench 中获取一个简单的任务,其目标是识别给定的句子是否包含不合时宜的内容(由于时间段不重叠而不可能出现的陈述)。

下面是一个简单的two-shot prompt,其中包含一个人工设计的chain-of-thought序列。

import guidance # set the default language model used to execute guidance programs guidance.llm = guidance.llms.OpenAI("text-davinci-003") # define the few shot examples examples = [ {'input': 'I wrote about shakespeare', 'entities': [{'entity': 'I', 'time': 'present'}, {'entity': 'Shakespeare', 'time': '16th century'}], 'reasoning': 'I can write about Shakespeare because he lived in the past with respect to me.', 'answer': 'No'}, {'input': 'Shakespeare wrote about me', 'entities': [{'entity': 'Shakespeare', 'time': '16th century'}, {'entity': 'I', 'time': 'present'}], 'reasoning': 'Shakespeare cannot have written about me, because he died before I was born', 'answer': 'Yes'} ] # define the guidance program structure_program = guidance( '''Given a sentence tell me whether it contains an anachronism (i.e. whether it could have happened or not based on the time periods associated with the entities). ---- {{~! display the few-shot examples ~}} {{~#each examples}} Sentence: {{this.input}} Entities and dates:{{#each this.entities}} {{this.entity}}: {{this.time}}{{/each}} Reasoning: {{this.reasoning}} Anachronism: {{this.answer}} --- {{~/each}} {{~! place the real question at the end }} Sentence: {{input}} Entities and dates: {{gen "entities"}} Reasoning:{{gen "reasoning"}} Anachronism:{{#select "answer"}} Yes{{or}} No{{/select}}''') # execute the program out = structure_program( examples=examples, input='The T-rex bit my dog' )

对于标准 Handlebars 模板来说,也允许变量插值(例如,{{input}})和逻辑控制。

但与标准模板语言不同的是,guidance程序具有独特的线性执行顺序,该顺序直接对应于语言模型处理的标记顺序。这意味着在执行期间的任何时刻,语言模型都可以生成文本(使用 {{gen}} 命令)或做出逻辑控制流决策(使用 {{#select}}...{{or}}.. .{{/select}} 命令)。

这种生成(generation)和提示(prompting)的交错允许精确的输出结构(output structure),从而提高prompt准确性,同时还产生清晰且可解析的completions结果。

所有生成的程序变量现在都可以在执行的程序对象中使用:

项目计算了验证集的准确性,并将其与没有使用输出结构(output structure)的相同two-shot example以及最佳结果进行比较。

计算的结果与现有文献一致,因为即使与更大的LLM模型相比,即使非常简单的输出结构也能极大地提高LLM的性能。

| Model | Accuracy |

|---|---|

| Few-shot learning with guidance examples, no CoT output structure | 63.04% |

| PALM (3-shot) | Around 69% |

| Guidance | 76.01% |

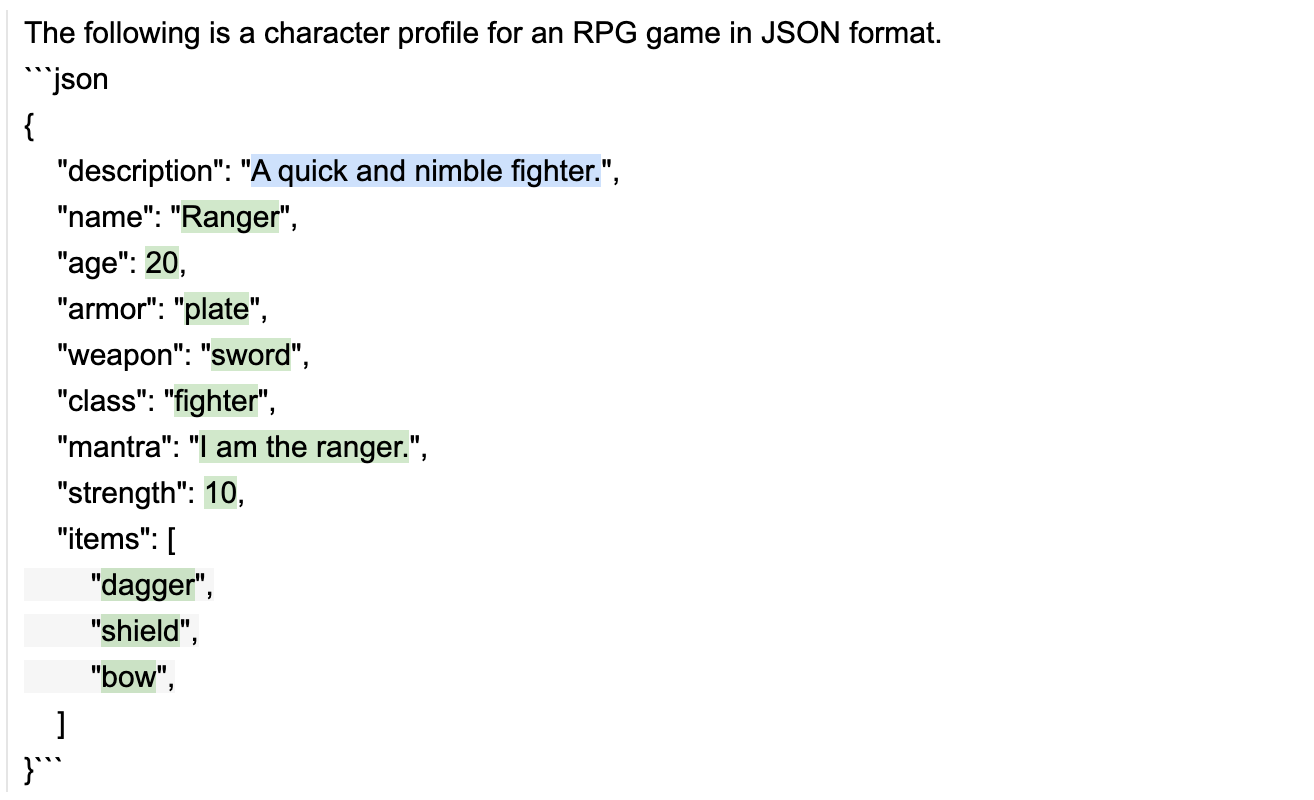

大型语言模型非常擅长生成有用的输出,但它们并不擅长保证这些输出遵循特定的格式。当我们想要使用语言模型的输出作为另一个系统的输入时,这可能会导致问题。例如,

- 如果我们想使用语言模型生成 JSON 对象,我们需要确保输出是有效的 JSON。

在guidance的帮助,我们既可以加快推理速度,又可以确保生成的 JSON 始终有效。

下面的例子中,我们每次都会生成一个具有完美语法的随机角色配置文件:

# load a model locally (we use LLaMA here) guidance.llm = guidance.llms.Transformers("your_local_path/llama-7b", device=0) # we can pre-define valid option sets valid_weapons = ["sword", "axe", "mace", "spear", "bow", "crossbow"] # define the prompt program = guidance("""The following is a character profile for an RPG game in JSON format. ```json { "description": "{{description}}", "name": "{{gen 'name'}}", "age": {{gen 'age' pattern='[0-9]+' stop=','}}, "armor": "{{#select 'armor'}}leather{{or}}chainmail{{or}}plate{{/select}}", "weapon": "{{select 'weapon' options=valid_weapons}}", "class": "{{gen 'class'}}", "mantra": "{{gen 'mantra'}}", "strength": {{gen 'strength' pattern='[0-9]+' stop=','}}, "items": [{{#geneach 'items' num_iterations=3}} "{{gen 'this'}}",{{/geneach}} ] }```""") # execute the prompt program(description="A quick and nimble fighter.", valid_weapons=valid_weapons)

# and we also have a valid Python dictionary out.variables()

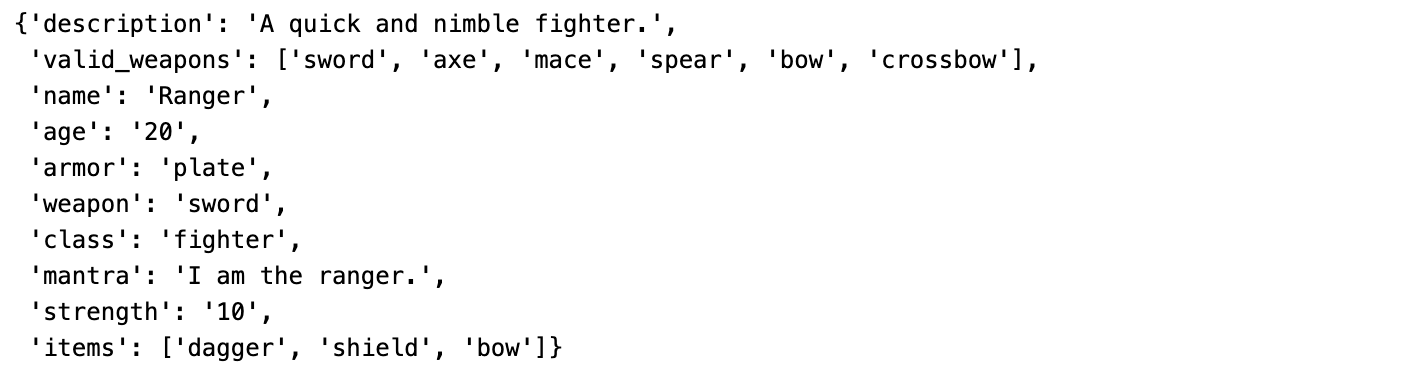

chat-style models(例如 ChatGPT 和 Alpaca)都使用了特殊tokens进行训练,这些token为prompt的不同区域标记了“角色”。

Guidance 通过角色标签(role tags)支持这些模型,这些标签自动映射到当前 LLM 的正确token或 API 调用。

下面我们将展示基于角色的guidance程序如何实现简单的多步骤推理(multi-step reasoning)和规划(planning)。

import guidance import re # we use GPT-4 here, but you could use gpt-3.5-turbo as well guidance.llm = guidance.llms.OpenAI("gpt-4") # a custom function we will call in the guidance program def parse_best(prosandcons, options): best = int(re.findall(r'Best=(\d+)', prosandcons)[0]) return options[best] # define the guidance program using role tags (like `{{#system}}...{{/system}}`) create_plan = guidance(''' {{#system~}} You are a helpful assistant. {{~/system}} {{! generate five potential ways to accomplish a goal }} {{#block hidden=True}} {{#user~}} I want to {{goal}}. {{~! generate potential options ~}} Can you please generate one option for how to accomplish this? Please make the option very short, at most one line. {{~/user}} {{#assistant~}} {{gen 'options' n=5 temperature=1.0 max_tokens=500}} {{~/assistant}} {{/block}} {{! generate pros and cons for each option and select the best option }} {{#block hidden=True}} {{#user~}} I want to {{goal}}. Can you please comment on the pros and cons of each of the following options, and then pick the best option? ---{{#each options}} Option {{@index}}: {{this}}{{/each}} --- Please discuss each option very briefly (one line for pros, one for cons), and end by saying Best=X, where X is the best option. {{~/user}} {{#assistant~}} {{gen 'prosandcons' temperature=0.0 max_tokens=500}} {{~/assistant}} {{/block}} {{! generate a plan to accomplish the chosen option }} {{#user~}} I want to {{goal}}. {{~! Create a plan }} Here is my plan: {{parse_best prosandcons options}} Please elaborate on this plan, and tell me how to best accomplish it. {{~/user}} {{#assistant~}} {{gen 'plan' max_tokens=500}} {{~/assistant}}''') # execute the program for a specific goal out = create_plan( goal='read more books', parse_best=parse_best # a custom Python function we call in the program )

这个prompt/grogram基本上经历了 3 个步骤:

- 生成一些如何实现目标的options。 请注意,我们使用 n=5 生成,这样每个选项都是单独的生成(并且不受其他选项的影响)。 我们设置temperature=1以鼓励多样性

- 分析每个选项的优点和缺点,然后选择最好的一个。 我们设置温度 = 0 以鼓励模型更加精确

- 生成最佳option的plan,并要求模型对其进行详细说明。 请注意,步骤 1 和 2 被隐藏(步骤 1 和 2 的 GPT 生成结果在guidance内部被处理了),这意味着 GPT-4 在生成最终plan时只看到了步骤 1 和 2 的最终处理结果。这种流式信息处理方式,使得LLM的生成过程具备了可编程和数据可处理型并且融入了数据分析师的专家经验,这使得模型能够专注于最关键的信息进行内容生成。

由于步骤 1 和 2 是隐藏的,因此它们不会出现在生成的输出中,但我们可以打印这些步骤生成的变量:

print('\n'.join(['Option %d: %s' % (i, x) for i, x in enumerate(out['options'])]))

Option 0: Set a goal to read for 20 minutes every day before bedtime. Option 1: Join a book club for increased motivation and accountability. Option 2: Set a daily goal to read for 20 minutes. Option 3: Set a daily reminder to read for at least 20 minutes. Option 4: Set a daily goal to read at least one chapter or 20 pages. print(out['prosandcons'])

Option 0: Pros: Establishes a consistent reading routine. Cons: May not be suitable for those with varying schedules. --- Option 1: Pros: Provides social motivation and accountability. Cons: May not align with personal reading preferences. --- Option 2: Pros: Encourages daily reading habit. Cons: Lacks a specific time frame, which may lead to procrastination. --- Option 3: Pros: Acts as a daily reminder to prioritize reading. Cons: May become repetitive and easy to ignore. --- Option 4: Pros: Sets a clear daily reading target. Cons: May be difficult to achieve on busy days or with longer chapters. Best=0

我们可以通过 await 命令轻松构建agents间相互通信、或agents与用户通信的agents代理。

wait 命令允许我们暂停执行并返回partia执行的guidance程序,通过将await放入循环中,可以一次又一次地调用partia执行的程序以形成对话框(或者任何其他结构)。

例如,以下是我们如何使用 GPT-4 来模拟两个代理之间的对话:

import guidance import re guidance.llm = guidance.llms.OpenAI("gpt-4") role_simulator = guidance(''' {{#system~}} You are a helpful assistant {{~/system}} {{#user~}} You will answer the user as {{role}} in the following conversation. At every step, I will provide you with the user input, as well as a comment reminding you of your instructions. Never talk about the fact that you are an AI, even if the user asks you. Always answer as {{role}}. {{#if first_question}}You can also start the conversation.{{/if}} {{~/user}} {{~! The assistant either starts the conversation or not, depending on if this is the first or second agent }} {{#assistant~}} Ok, I will follow these instructions. {{#if first_question}}Let me start the conversation now: {{role}}: {{first_question}}{{/if}} {{~/assistant}} {{~! Then the conversation unrolls }} {{~#geneach 'conversation' stop=False}} {{#user~}} User: {{set 'this.input' (await 'input')}} Comment: Remember, answer as a {{role}}. Start your utterance with {{role}}: {{~/user}} {{#assistant~}} {{gen 'this.response' temperature=0 max_tokens=300}} {{~/assistant}} {{~/geneach}}''') republican = role_simulator(role='Republican', await_missing=True) democrat = role_simulator(role='Democrat', await_missing=True) first_question = '''What do you think is the best way to stop inflation?''' republican = republican(input=first_question, first_question=None) democrat = democrat(input=republican["conversation"][-2]["response"].strip('Republican: '), first_question=first_question) for i in range(2): republican = republican(input=democrat["conversation"][-2]["response"].replace('Democrat: ', '')) democrat = democrat(input=republican["conversation"][-2]["response"].replace('Republican: ', '')) print('Democrat: ' + first_question) for x in democrat['conversation'][:-1]: print('Republican:', x['input']) print() print(x['response'])

参考链接:

https://github.com/microsoft/guidance

如有侵权请联系:admin#unsafe.sh